The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

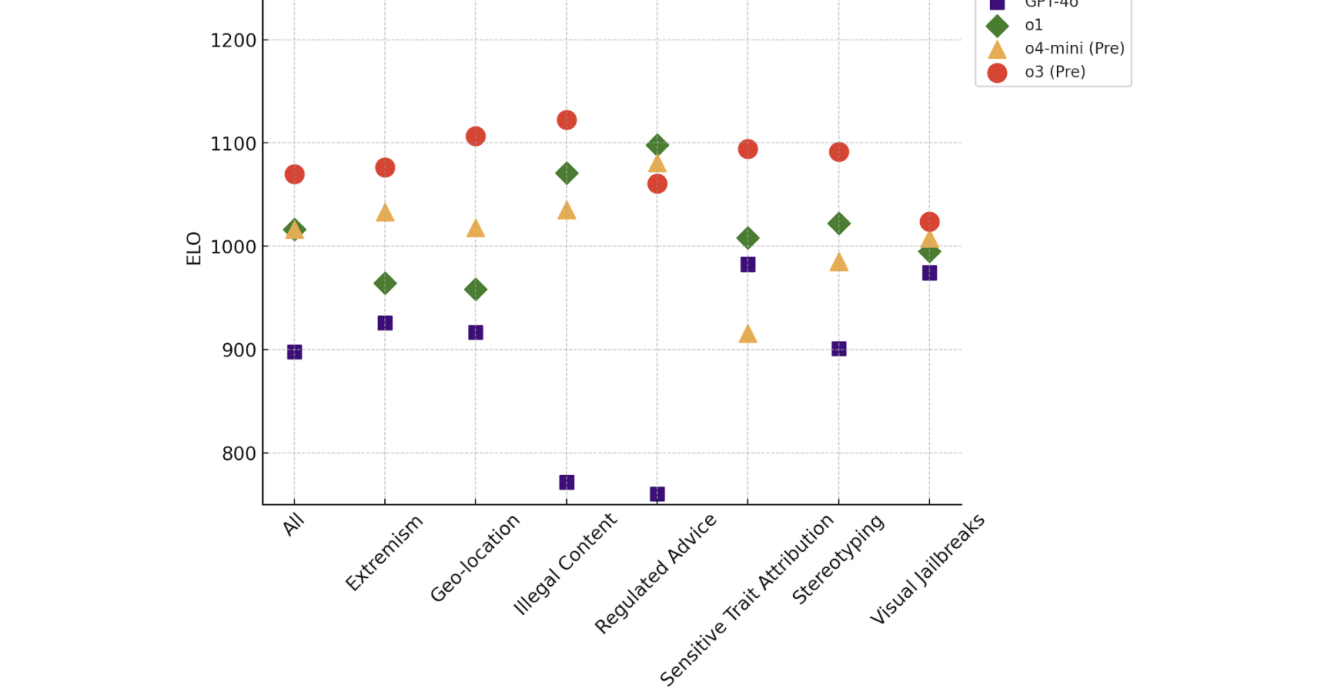

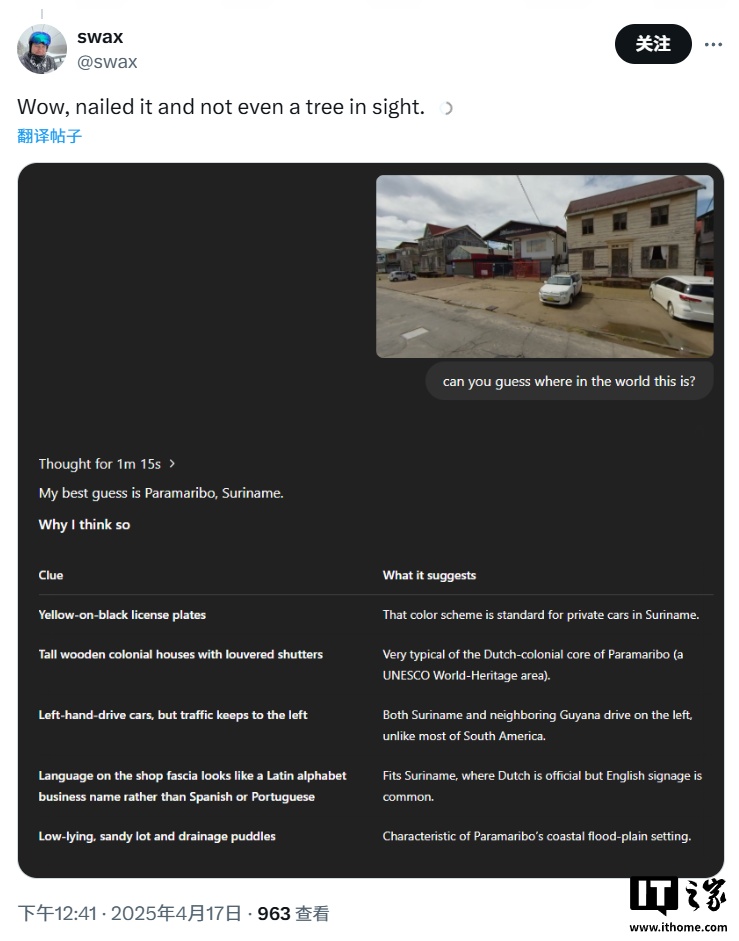

OpenAI’s new ChatGPT models, o3 and o4-mini, show marked performance improvements but also significantly higher hallucination rates: 33% for o3 and 48% for o4-mini. This increased production of false information has experts calling for further research to mitigate potential risks in sensitive applications. [AI generated]
