The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

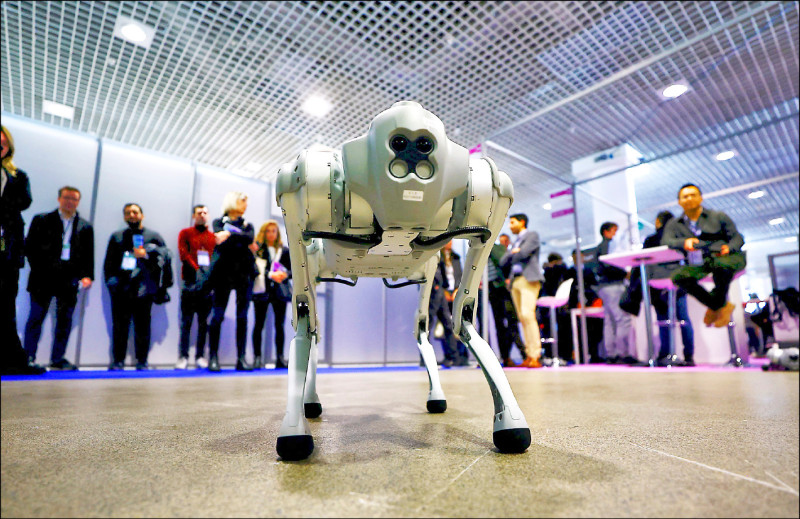

A US congressional committee urged an investigation of, and sanctions against, Chinese robotics firm Unitree over hidden “CloudSail” backdoors in its quadruped robots, which are used by US prisons, police and military. The committee warned that the robots could be remotely controlled to exfiltrate data and facilitate espionage, posing significant national security risks. [AI generated]