The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

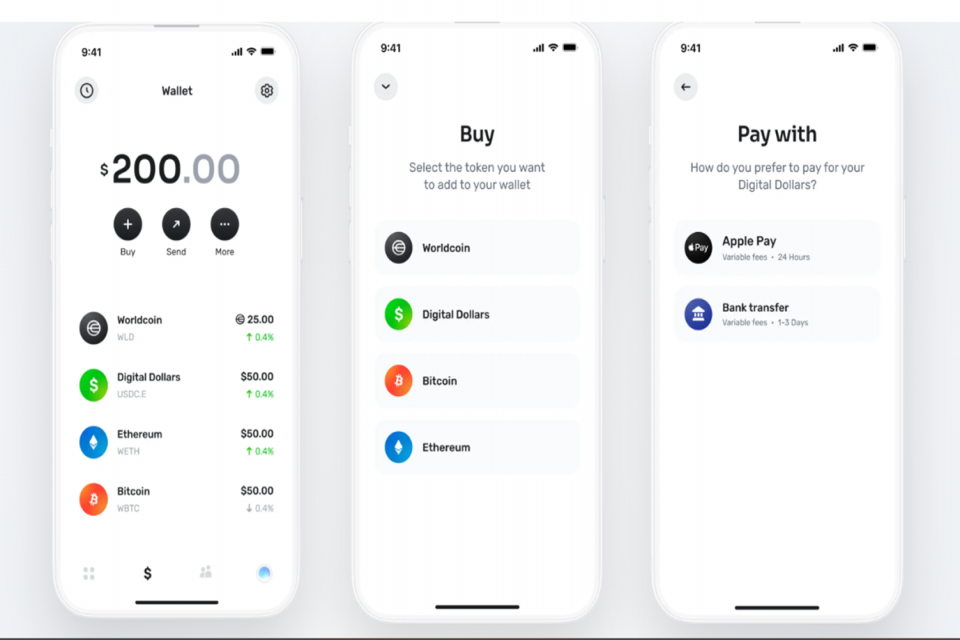

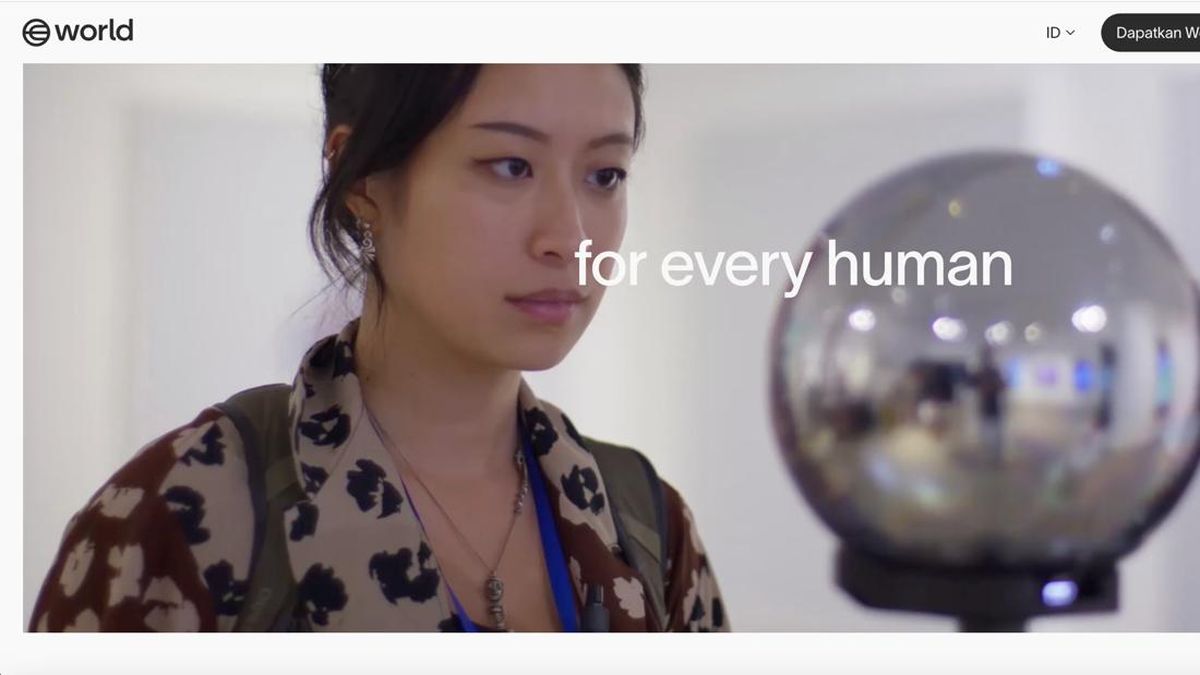

Worldcoin’s AI-driven iris-scanning system, which offers crypto rewards, has been suspended in Indonesia, Kenya, Hong Kong, Portugal, and Spain over concerns about privacy breaches, data misuse, and potential human rights violations. Regulatory authorities halted the service following public complaints and legal concerns about the collection of biometric data.[AI generated]