The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

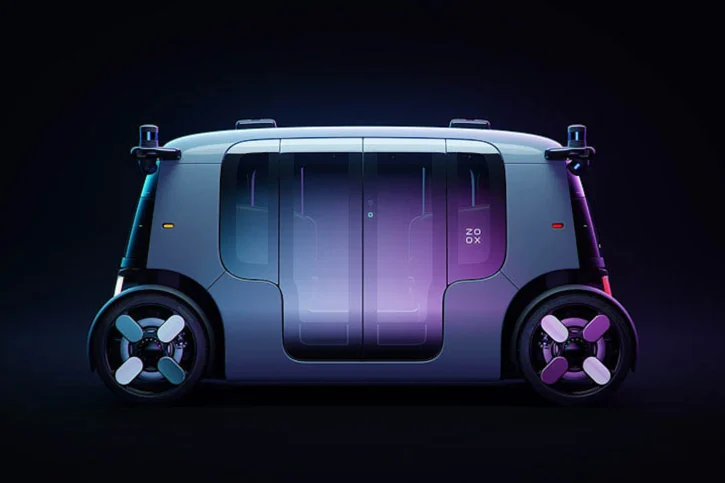

Amazon's Zoox recalled 270 self-driving vehicles following an incident in Las Vegas in which an AI-powered robotaxi collided with a passenger vehicle. The crash, caused by a software prediction error in the automated driving system, led to a safety review and the deployment of a critical software update. [AI generated]