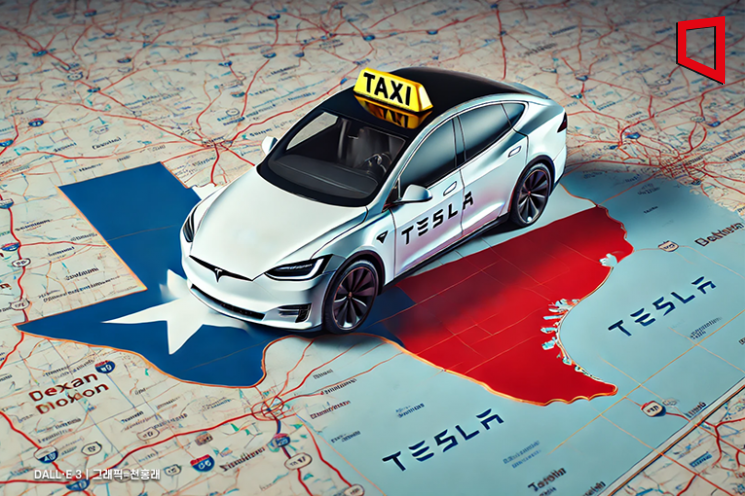

An AI system is clearly involved: Tesla's Full Self-Driving (FSD) software, which is designed for autonomous driving. The event concerns the use and potential malfunction of this system, since the National Highway Traffic Safety Administration (NHTSA) is investigating collisions linked to FSD in poor-visibility conditions. The planned robotaxi service would rely on the same system, potentially without human supervision, which raises plausible safety risks. However, the robotaxi service has not yet launched, so no new harm has arisen from it, and the investigation is still ongoing. This event therefore represents an AI Hazard: the system's use could plausibly lead to harm (e.g., accidents) if it is deployed without sufficient safety assurances. It is not Complementary Information, because it focuses on the investigation and the prospective risks rather than on updates or responses to a past incident. Nor is it an AI Incident, because no new harm has been reported from the robotaxi service.