The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

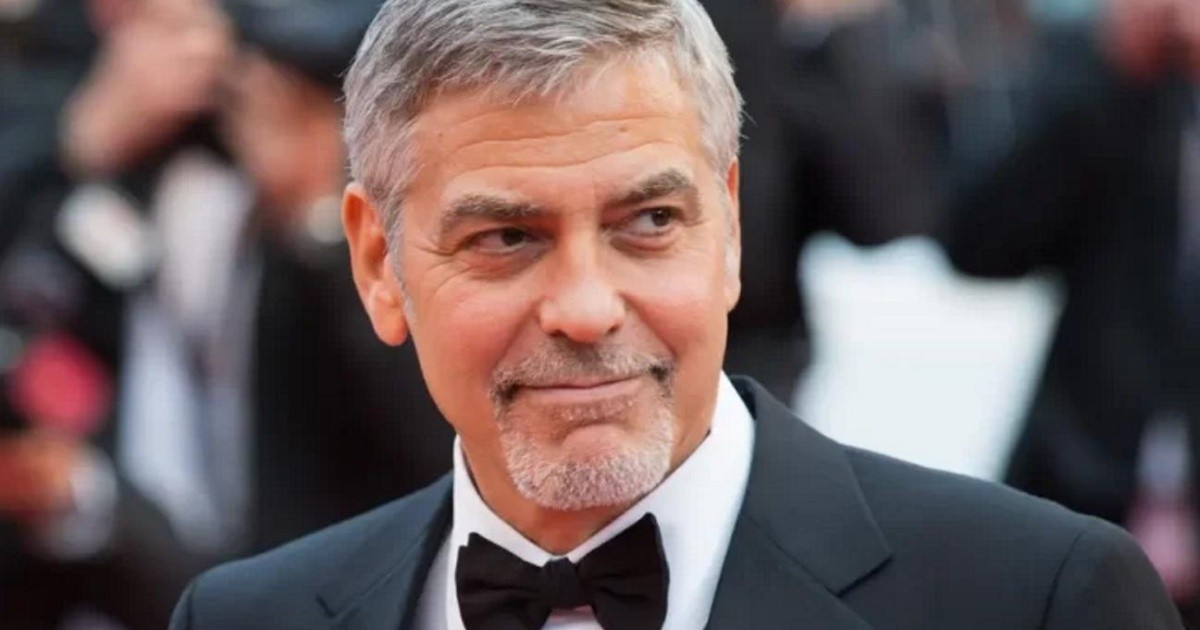

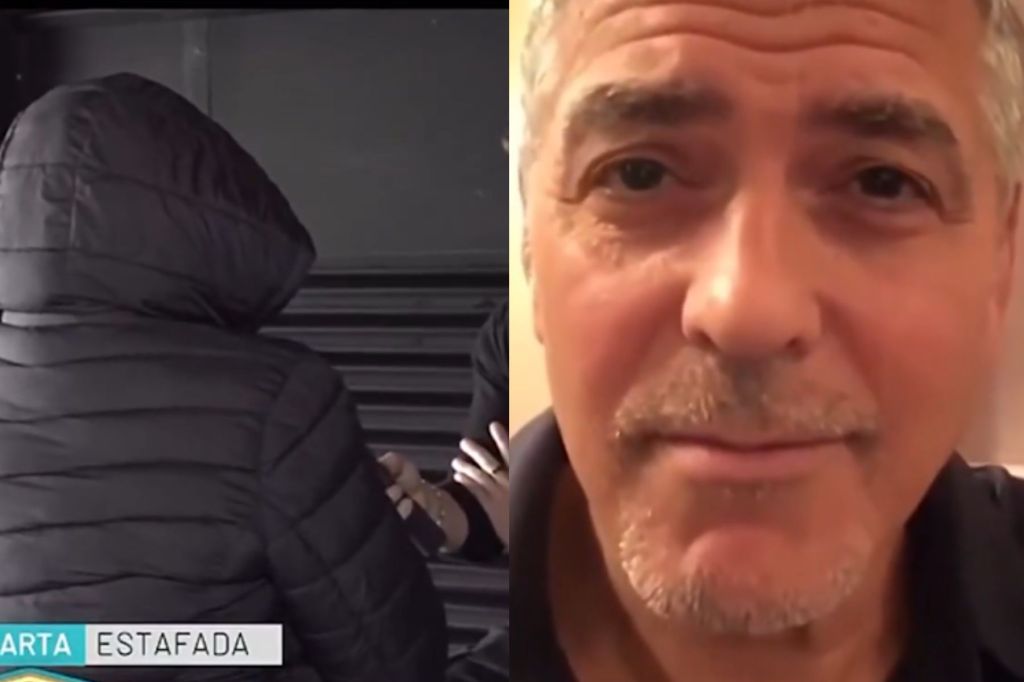

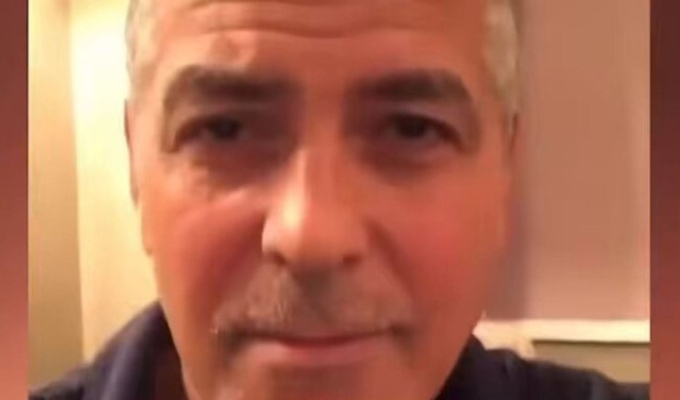

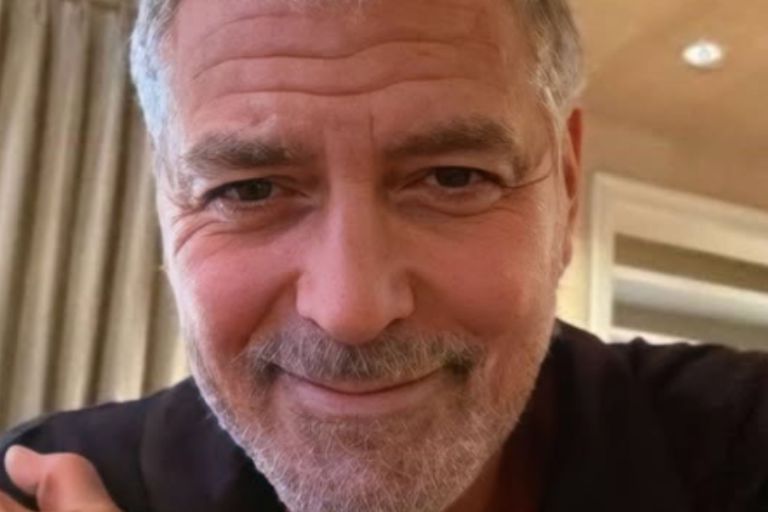

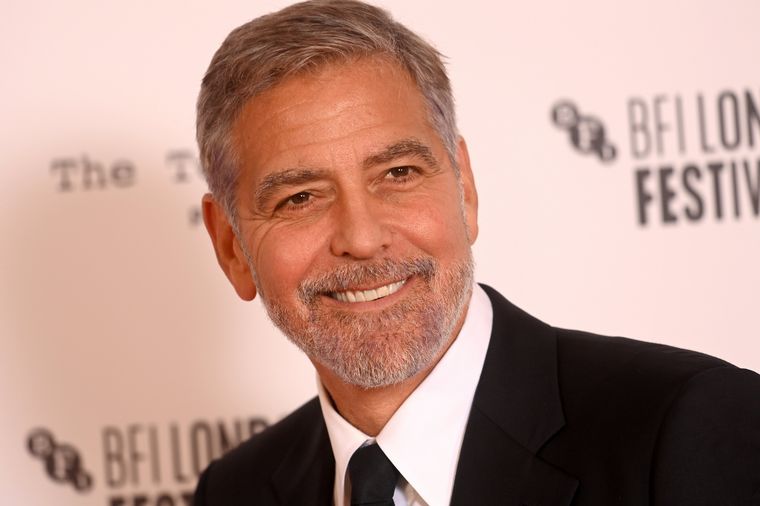

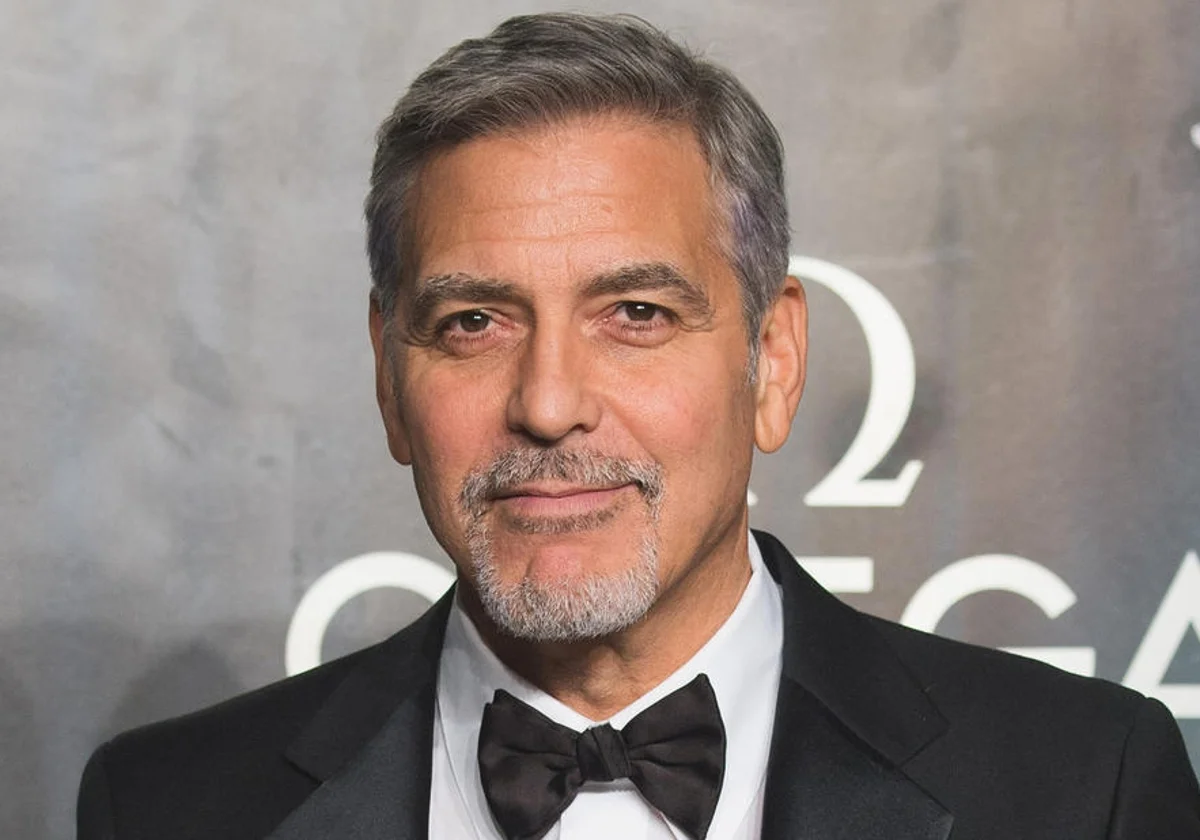

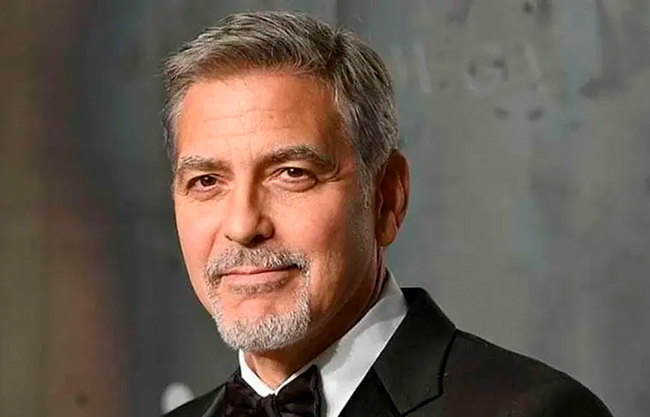

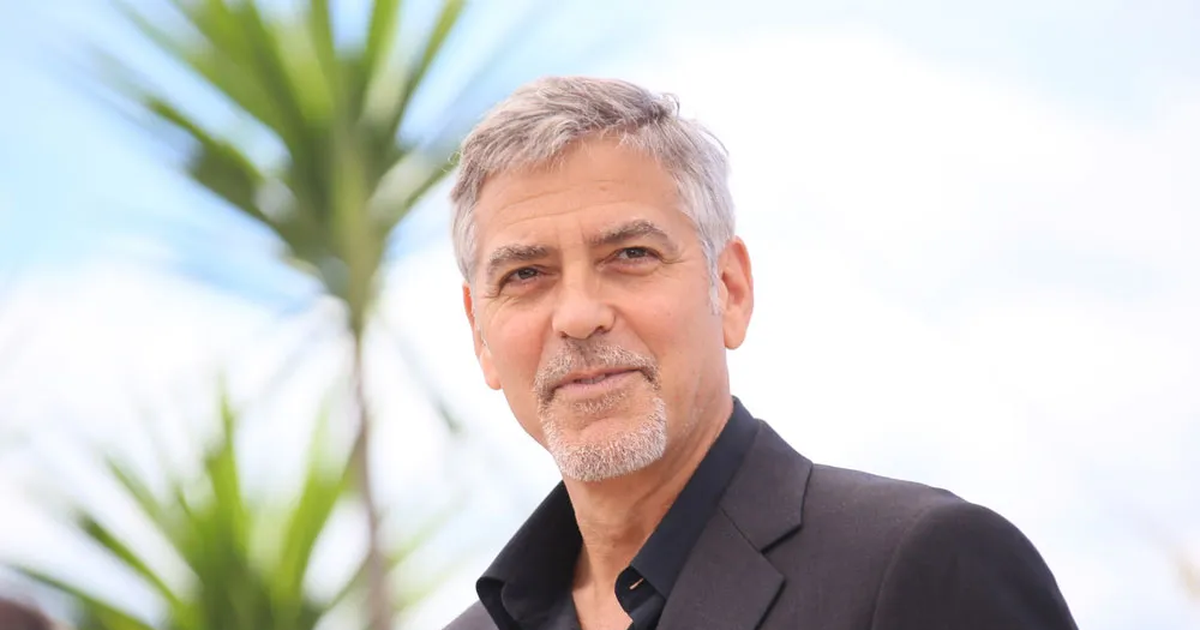

An Argentine woman was deceived during a month-long online interaction in which a scammer used AI-generated deepfake videos and verified profiles to impersonate George Clooney. Believing the persona was real, she lost over $15,000 through fraudulent requests tied to fan club access and job opportunities.[AI generated]