The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

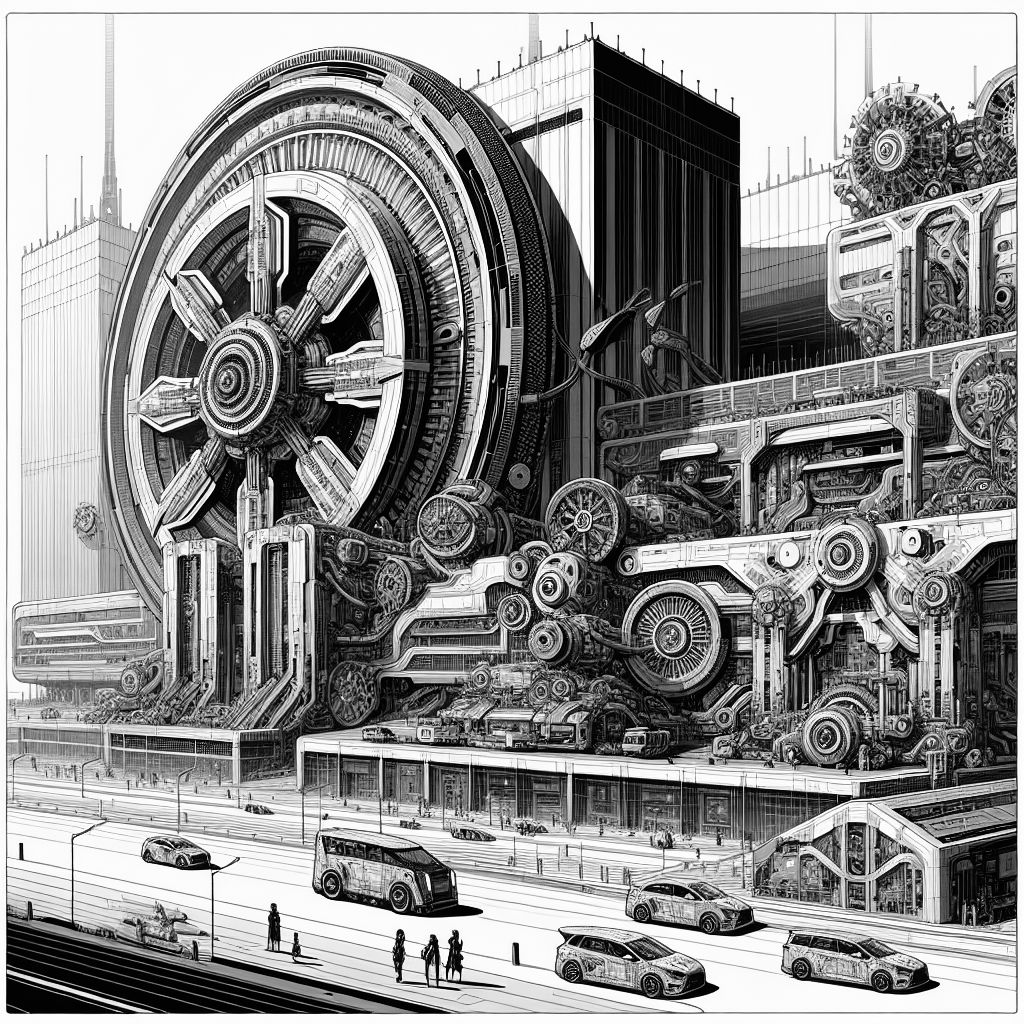

Waymo recalled over 1,200 self-driving taxis following a software glitch that led to collisions with road barriers, such as chains and gates, across several cities. The issue, identified through 16 reported incidents and additional near-collisions, prompted software updates and an NHTSA investigation, though no serious injuries were reported. [AI generated]