The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

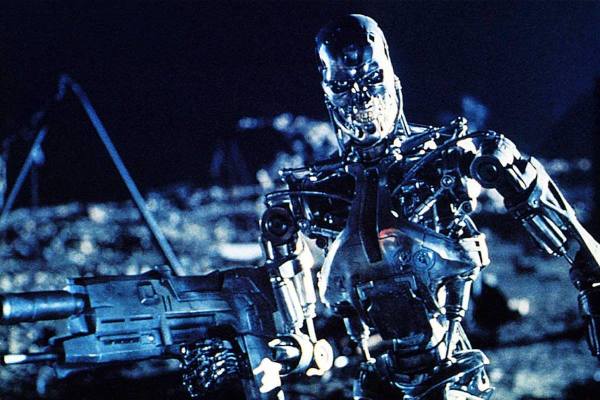

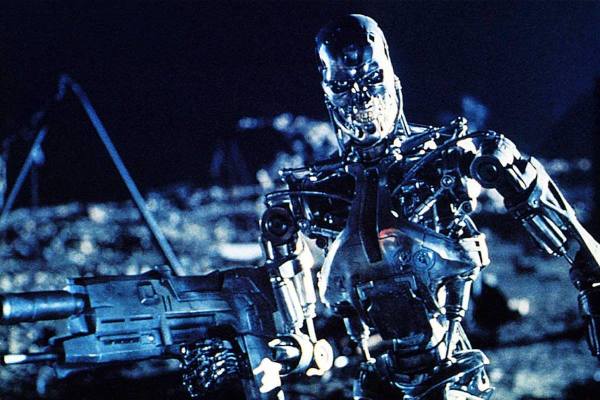

Global discussions at the United Nations highlight the rapid development of AI-enabled autonomous weapons. Experts warn that unchecked advances could trigger an arms race, create accountability gaps, and cause civilian harm, and they call for urgent international regulation to mitigate these risks. [AI generated]