The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

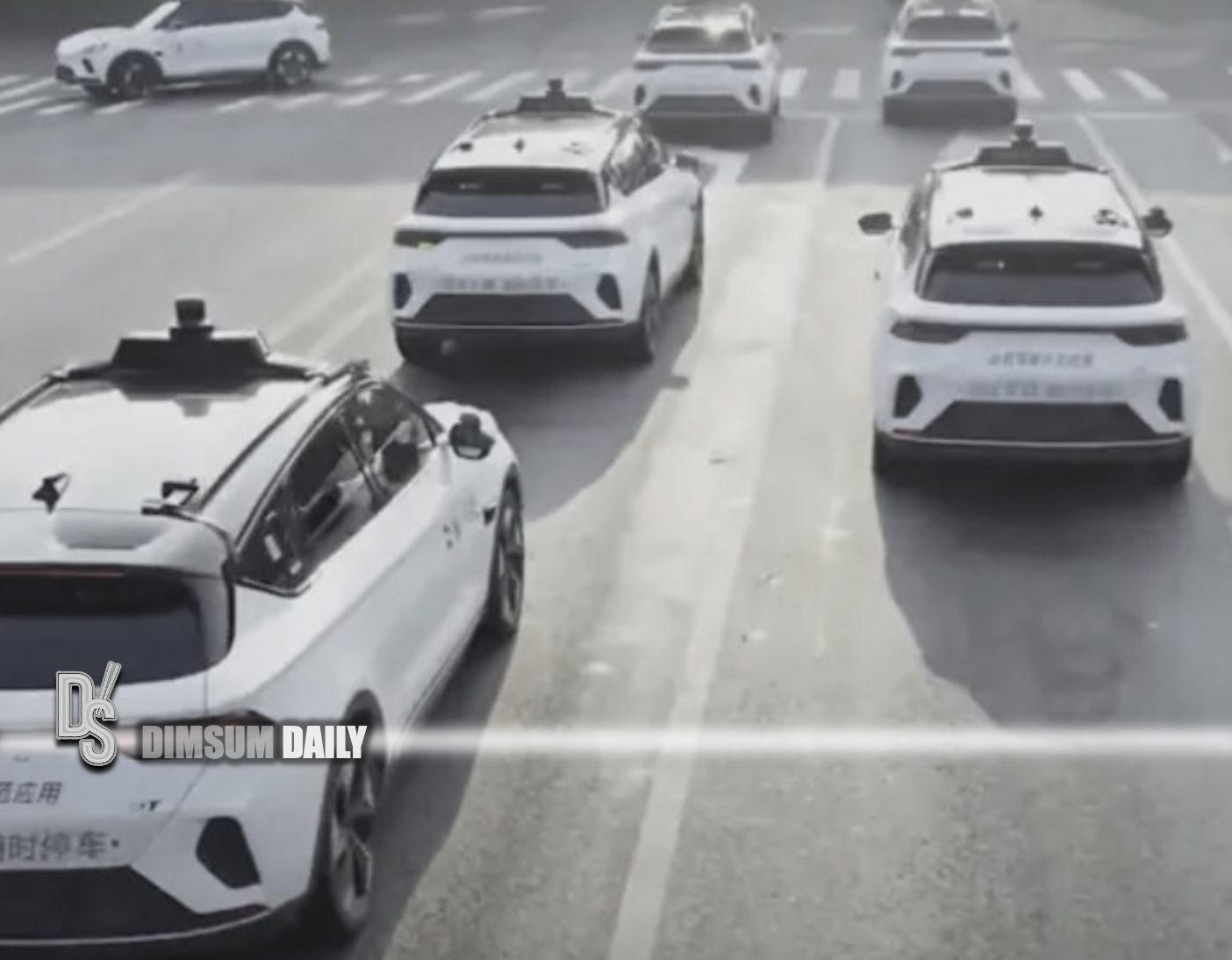

Baidu plans to launch its Apollo Go driverless taxi service in Europe, beginning tests in Switzerland and Turkey by year-end. The Chinese tech giant is in talks with Swiss Post to set up a local entity and navigate conservative Level-4 AV regulations, as it faces competition from Uber, Tesla, and Waymo. [AI generated]