The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

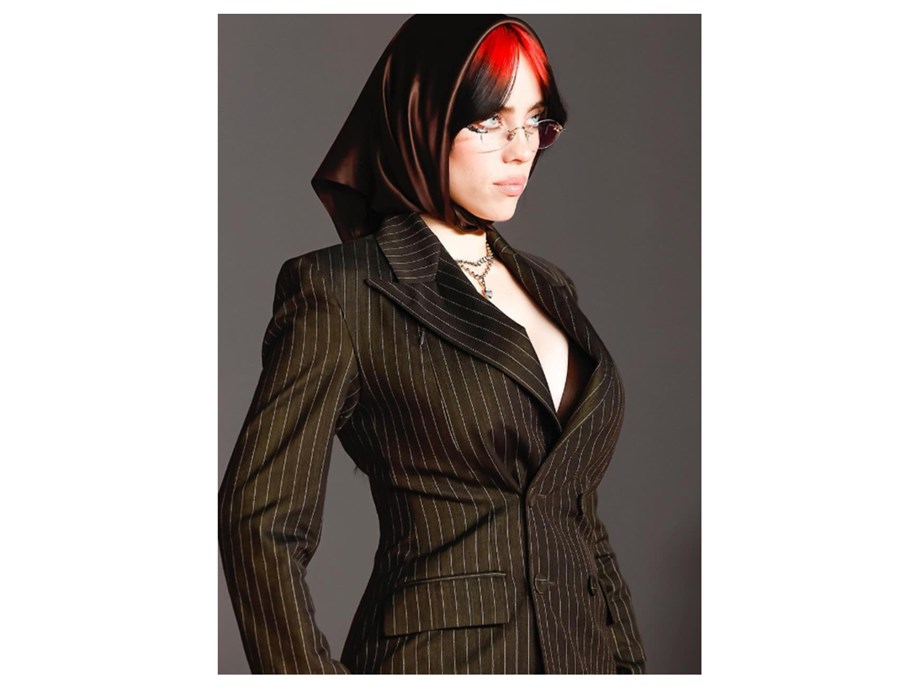

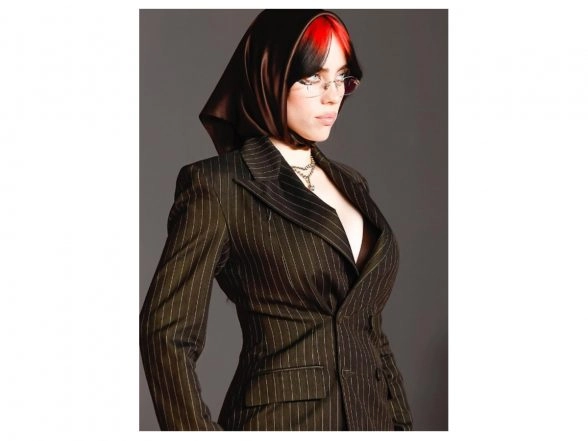

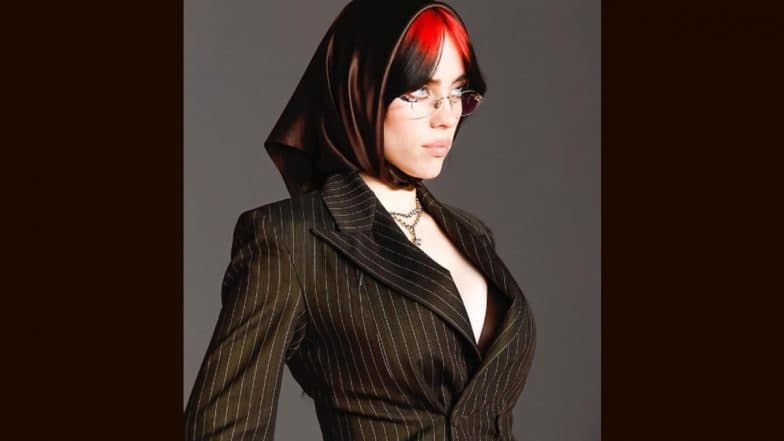

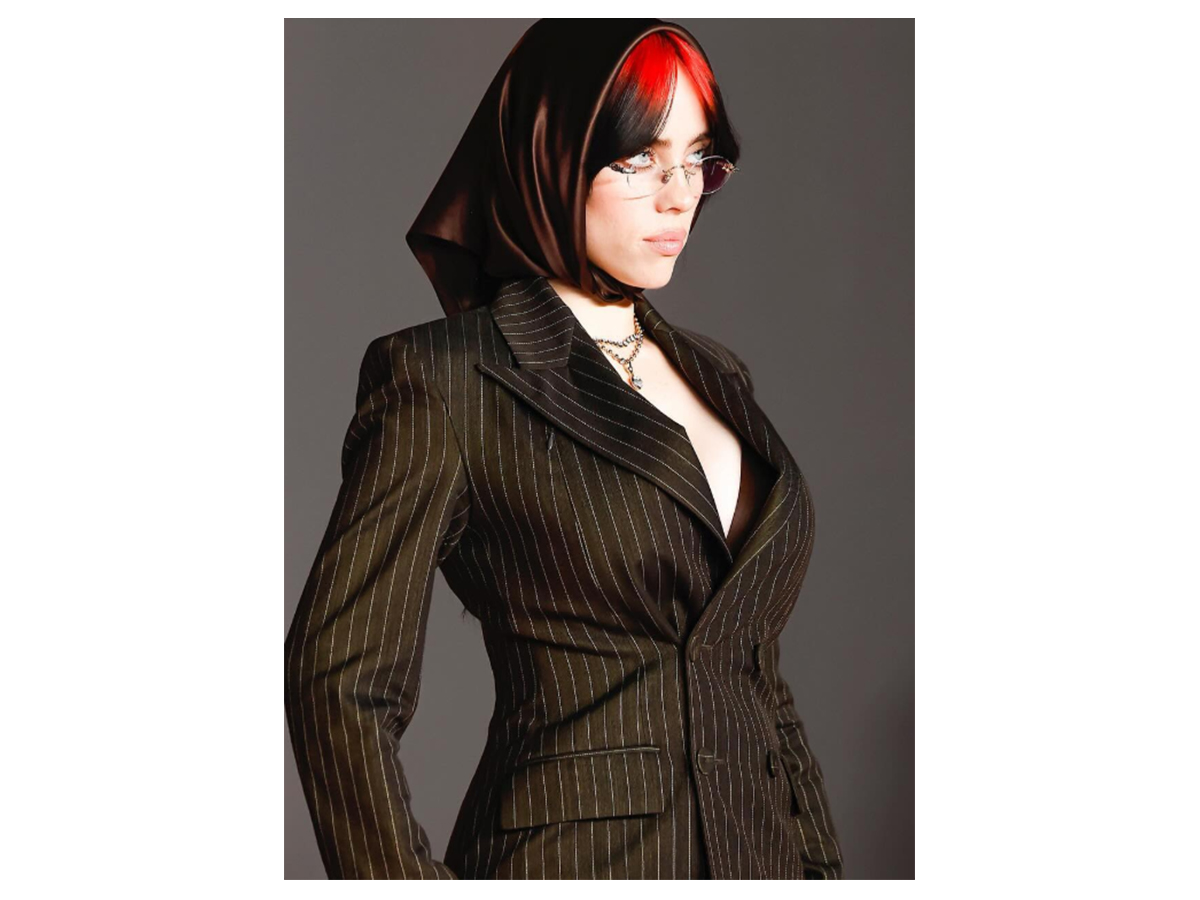

Multiple reports detail how AI-generated images falsely depicted Billie Eilish at the 2025 Met Gala. The singer, who was performing in Europe, publicly debunked these deepfakes on social media, highlighting concerns over misrepresentation, potential defamation, and violations of intellectual property rights through advanced AI technology. [AI generated]
