The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

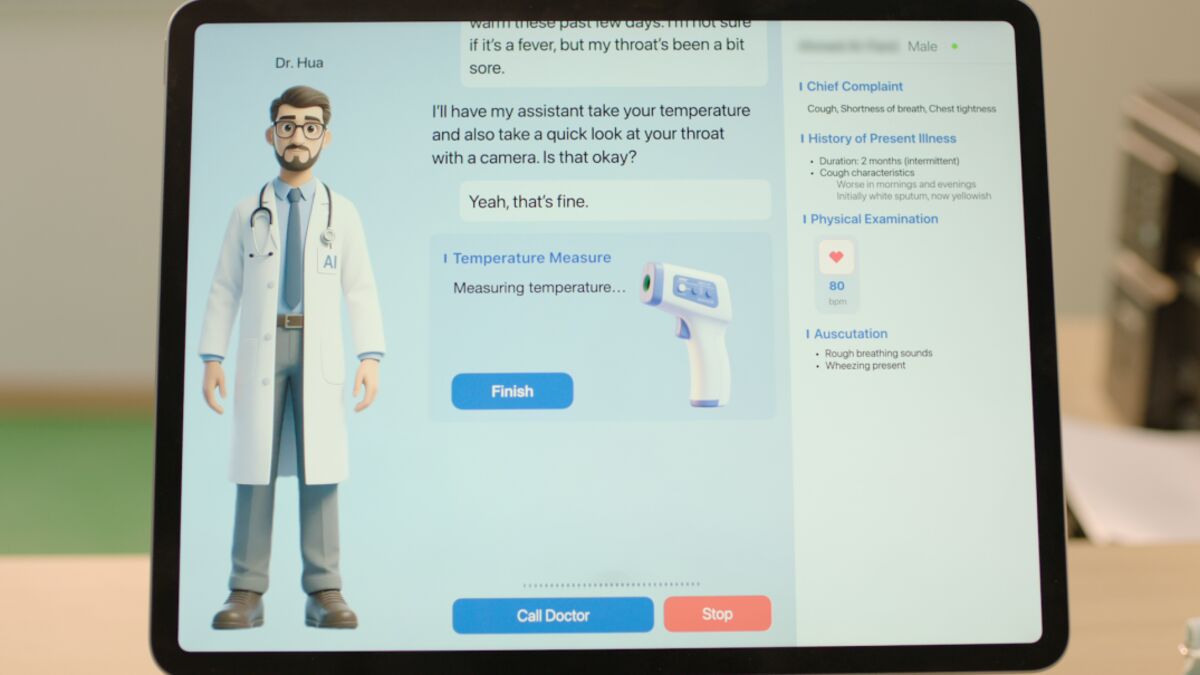

Shanghai-based Synyi AI, in partnership with Almoosa Health Group, has launched a clinic in Saudi Arabia where the AI system 'Dr. Hua' diagnoses patients and prescribes treatments under human oversight. Although early testing shows low error rates, the approach could pose risks if misdiagnoses or privacy breaches occur.[AI generated]
