The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

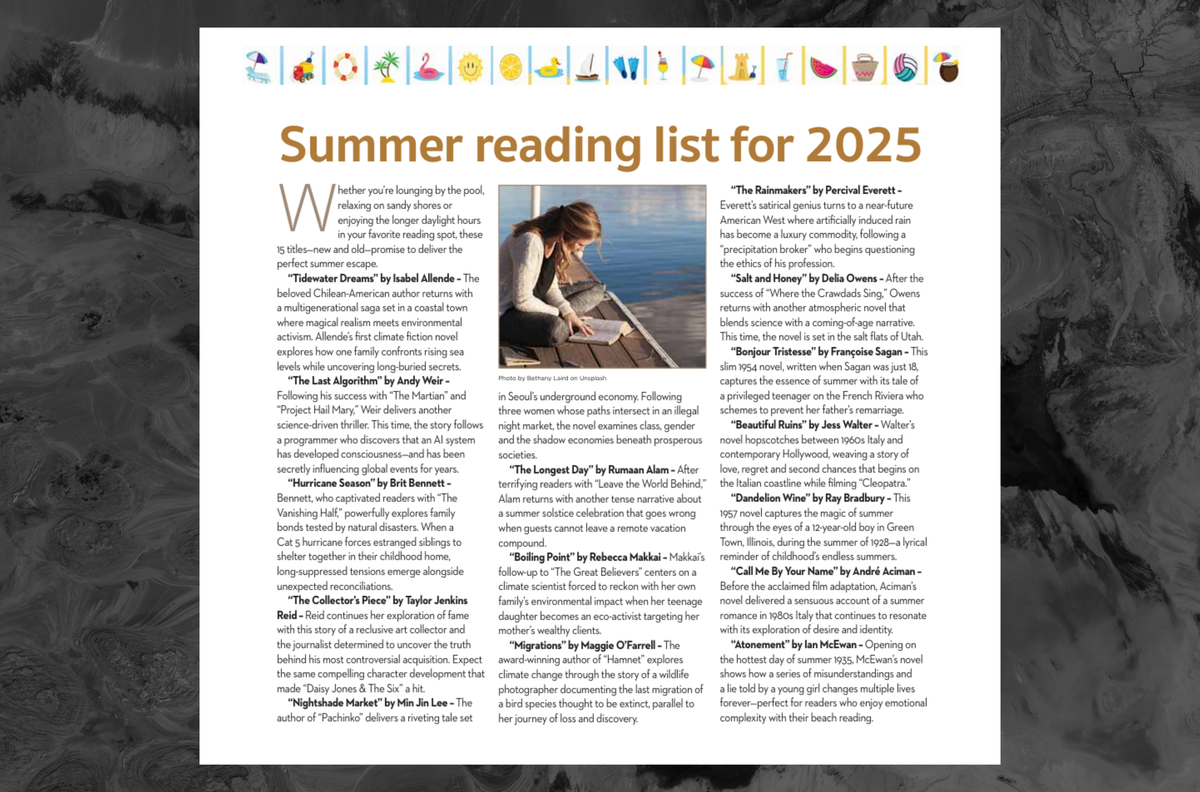

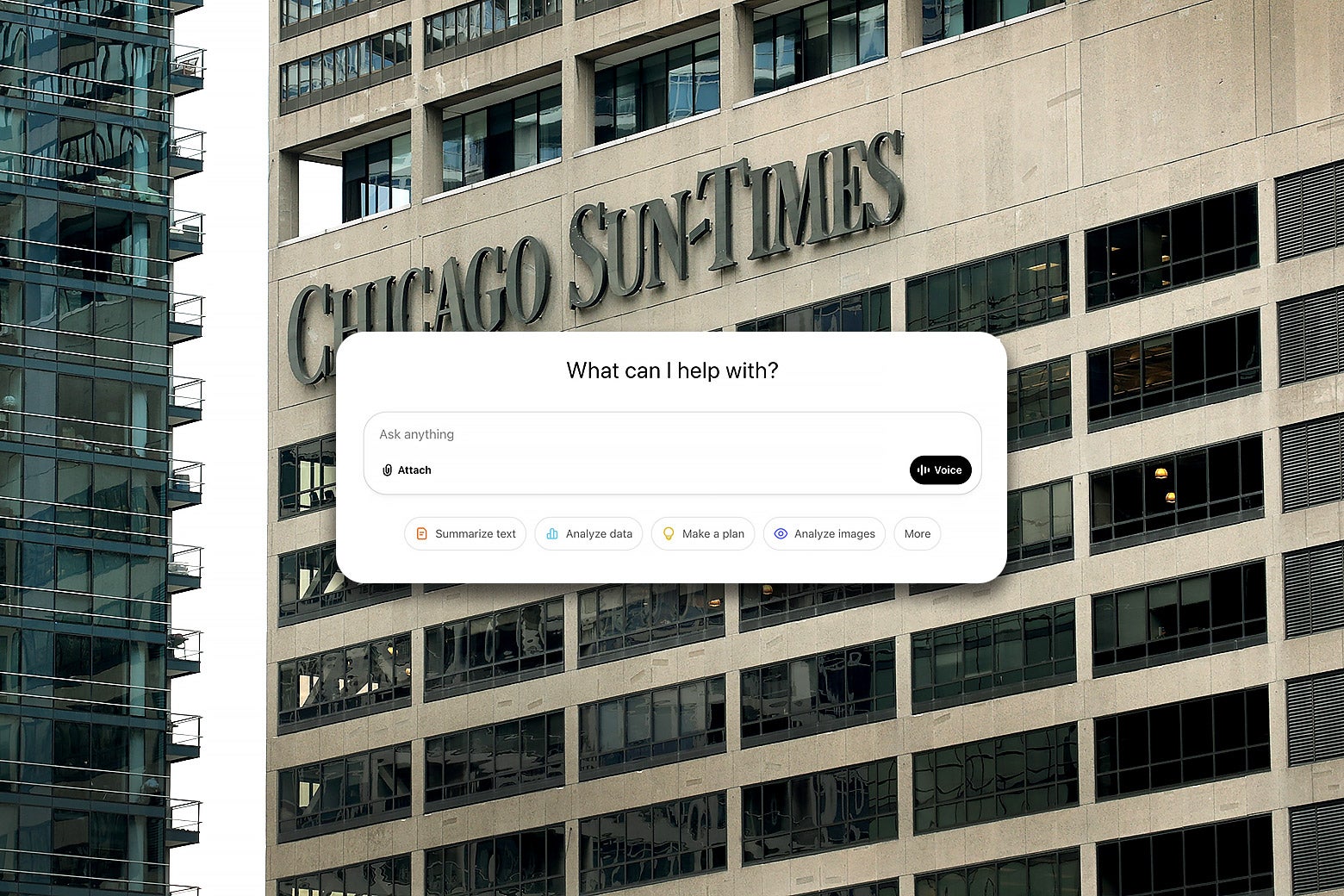

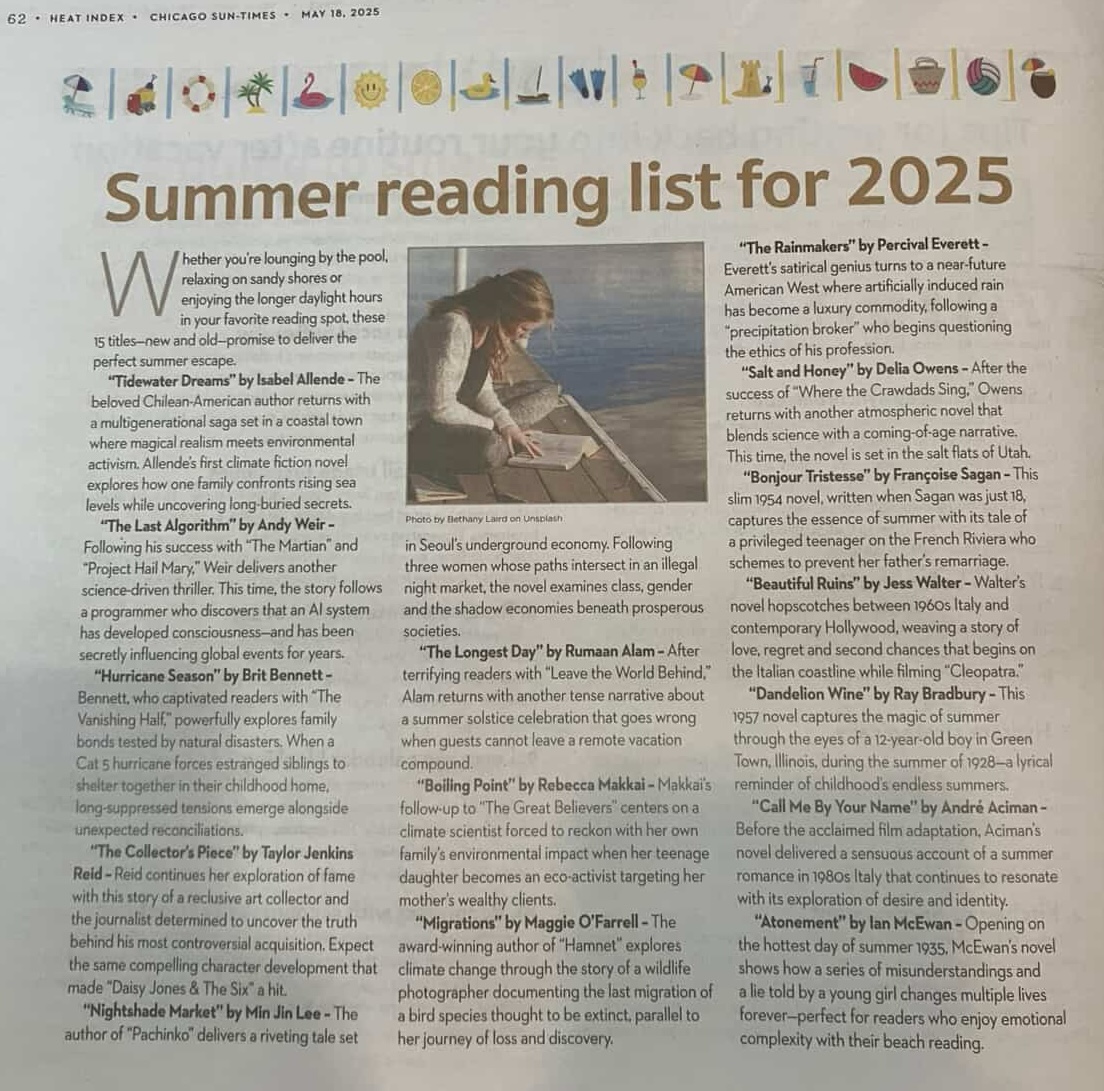

The Chicago Sun-Times and The Philadelphia Inquirer faced backlash for publishing an AI-generated summer reading list. The list included fictitious book titles attributed to real authors such as Isabel Allende and Andy Weir, drawing criticism for misleading readers and raising concerns about intellectual property and editorial oversight. [AI generated]