The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

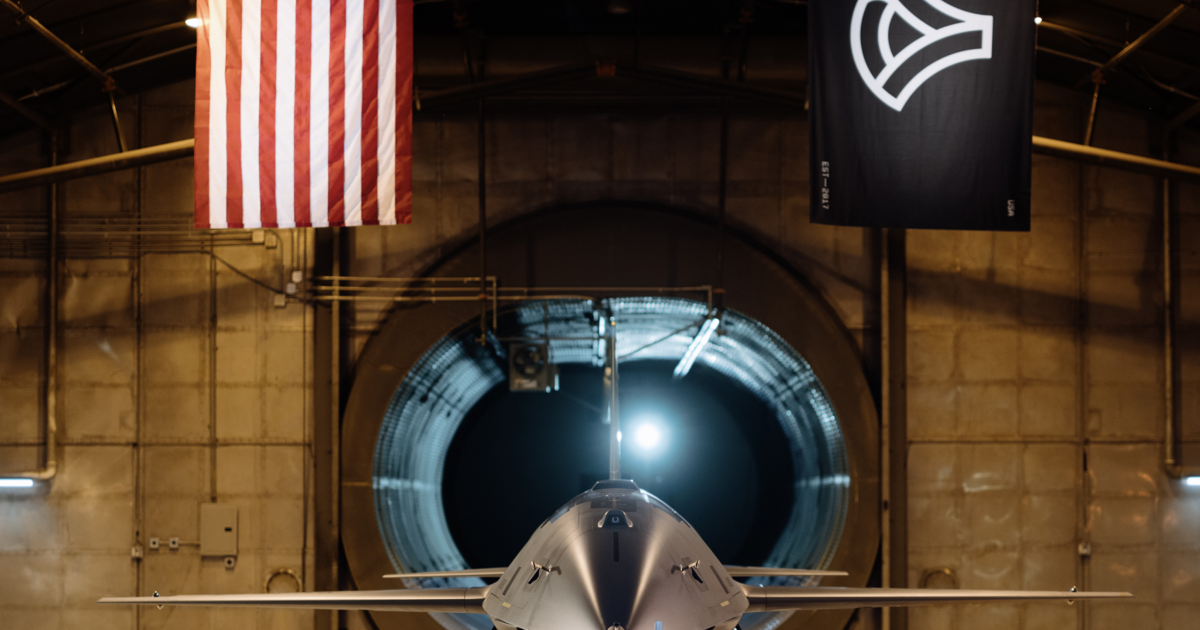

The U.S. Air Force and General Atomics have unveiled the YFQ-42A, an AI-enabled stealth unmanned combat aircraft, and begun ground testing as part of the Collaborative Combat Aircraft program. Designed to fly alongside F-22s and F-35s, the drone marks a major advance in lethal autonomous capabilities and poses potential risks on future battlefields. [AI generated]