The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

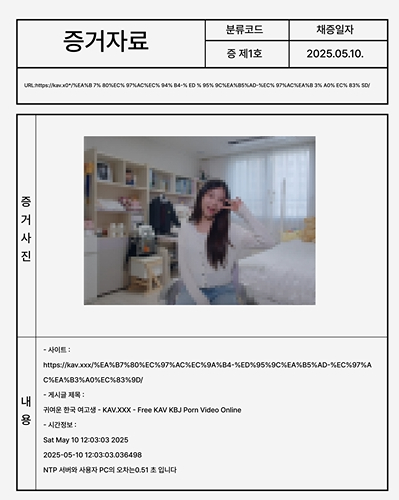

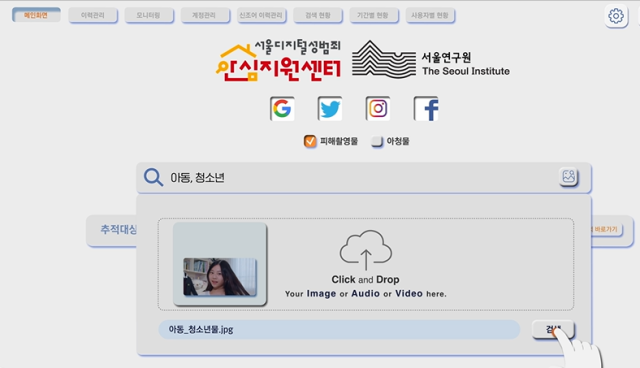

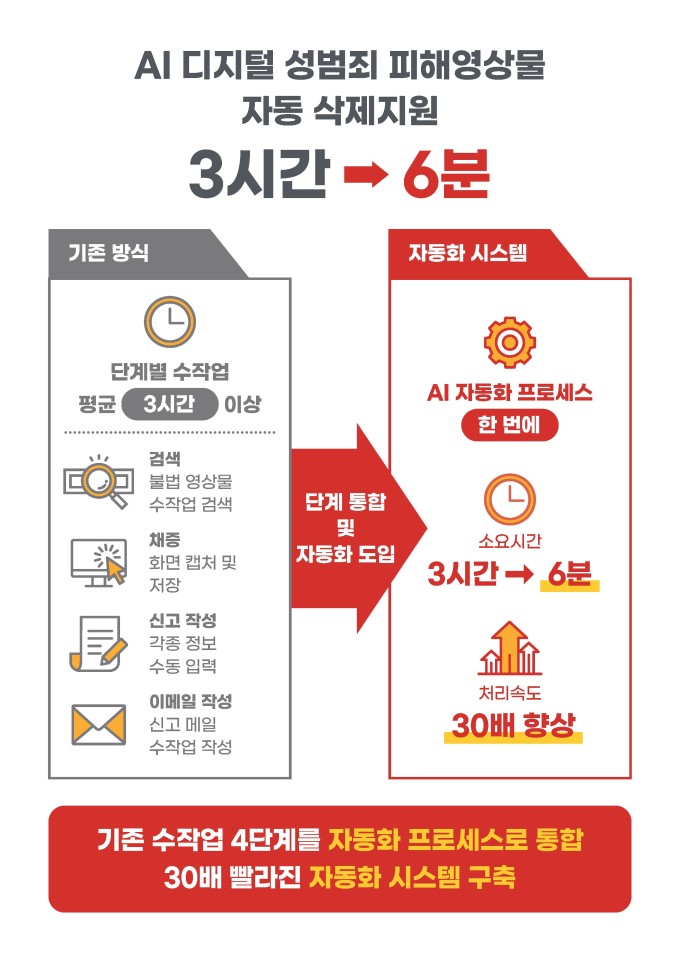

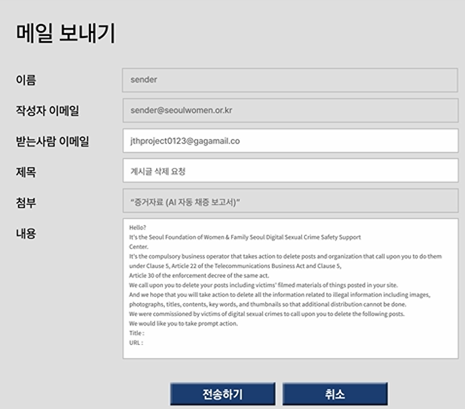

Seoul City and the Seoul Institute have unveiled South Korea’s first 24/7 ‘AI automatic deletion-reporting system’ for digital sexual exploitation videos. The AI detects illegal content, compiles evidence, and drafts multilingual removal requests, which support officers then review. The system cuts reporting time from around three hours to just six minutes. [AI generated]