The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

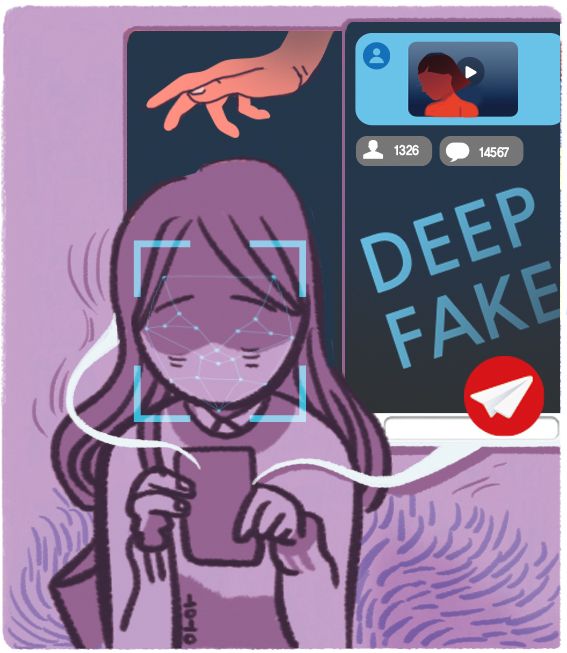

South Korean authorities arrested a high school student and charged 23 others for using AI deepfake technology to create explicit images and videos by superimposing the faces of celebrities and ordinary women onto nude bodies. The material was distributed via Telegram, and the offenses violated privacy and sexual exploitation laws. [AI generated]