The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

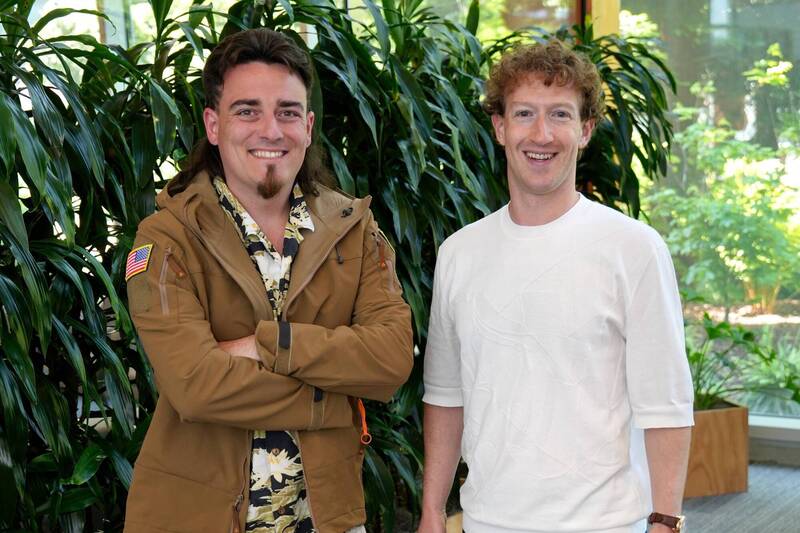

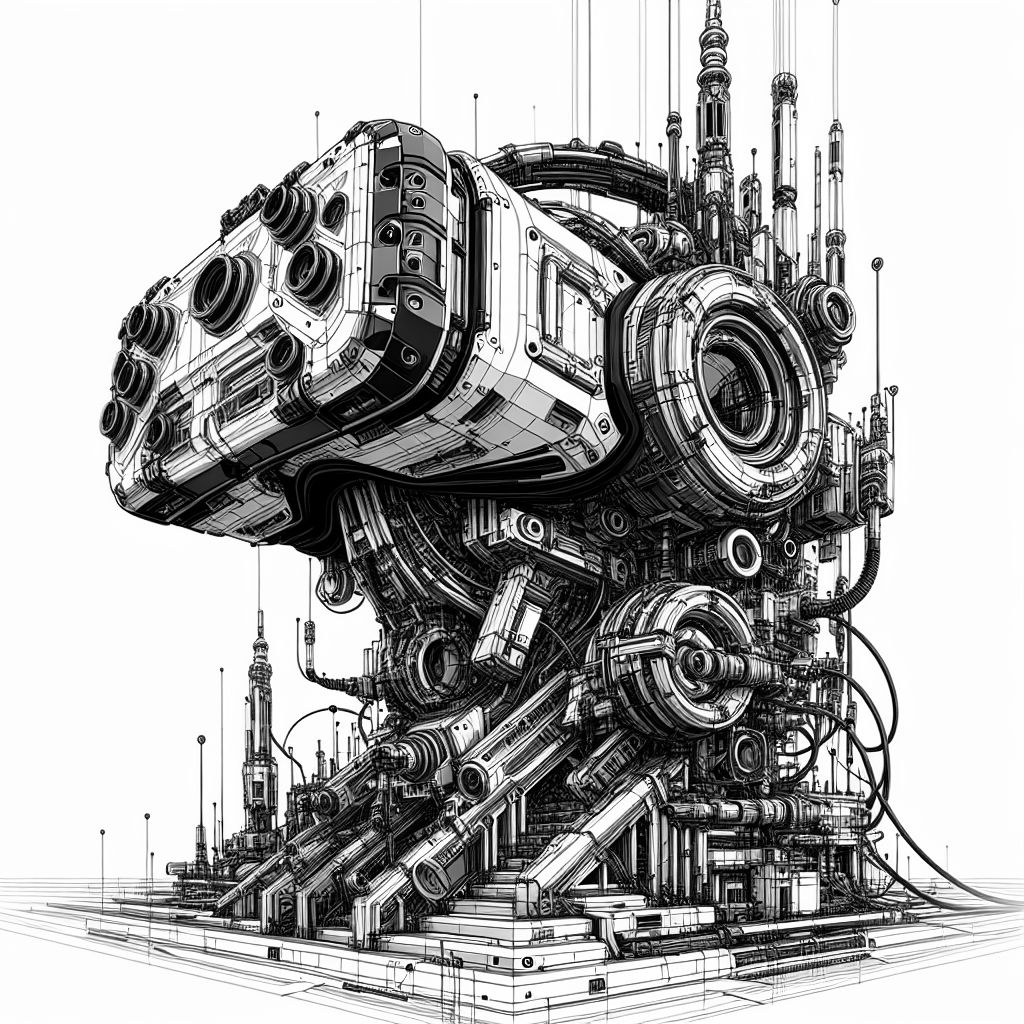

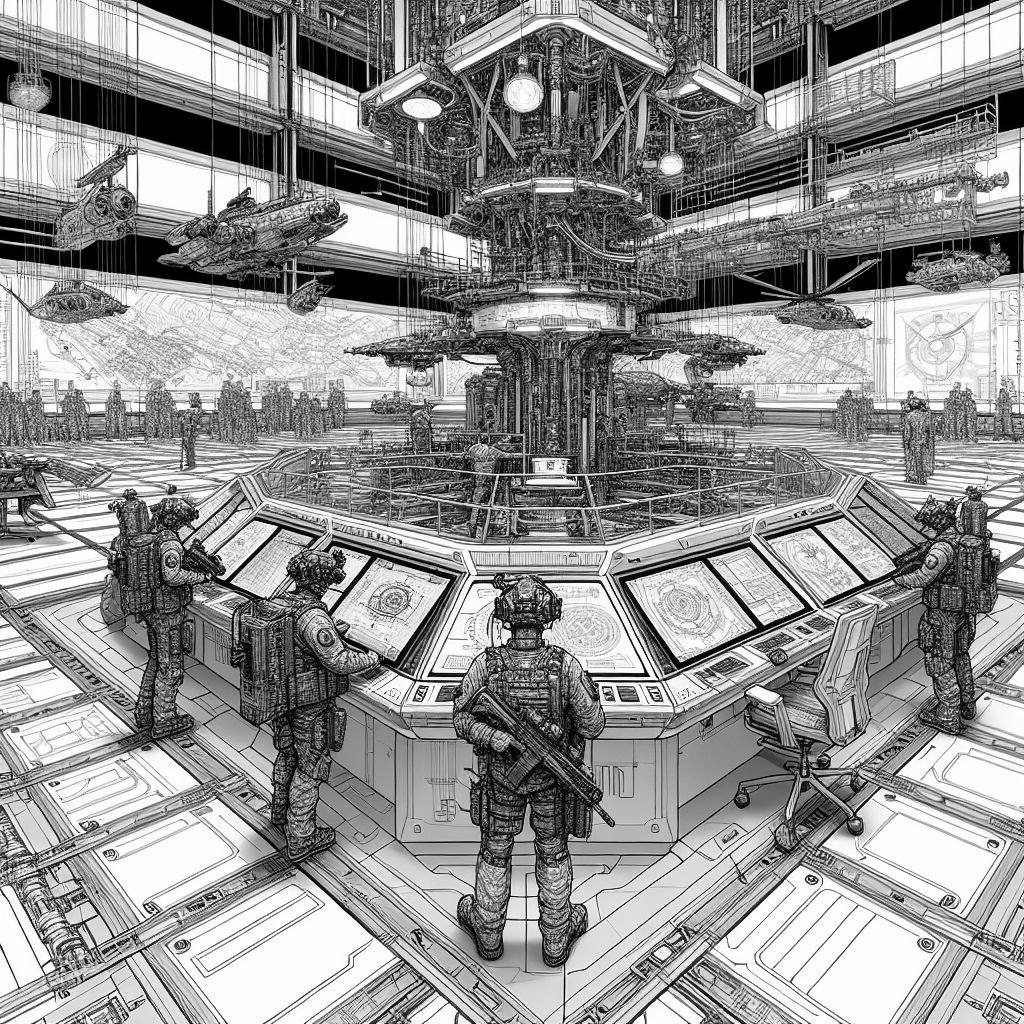

Meta and Anduril announced a joint project to develop an AI-powered VR/AR combat helmet called EagleEye for the US military. The device integrates advanced sensors and AI to enhance situational awareness and interact with autonomous systems, representing a significant leap into military AI technology. [AI generated]