The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

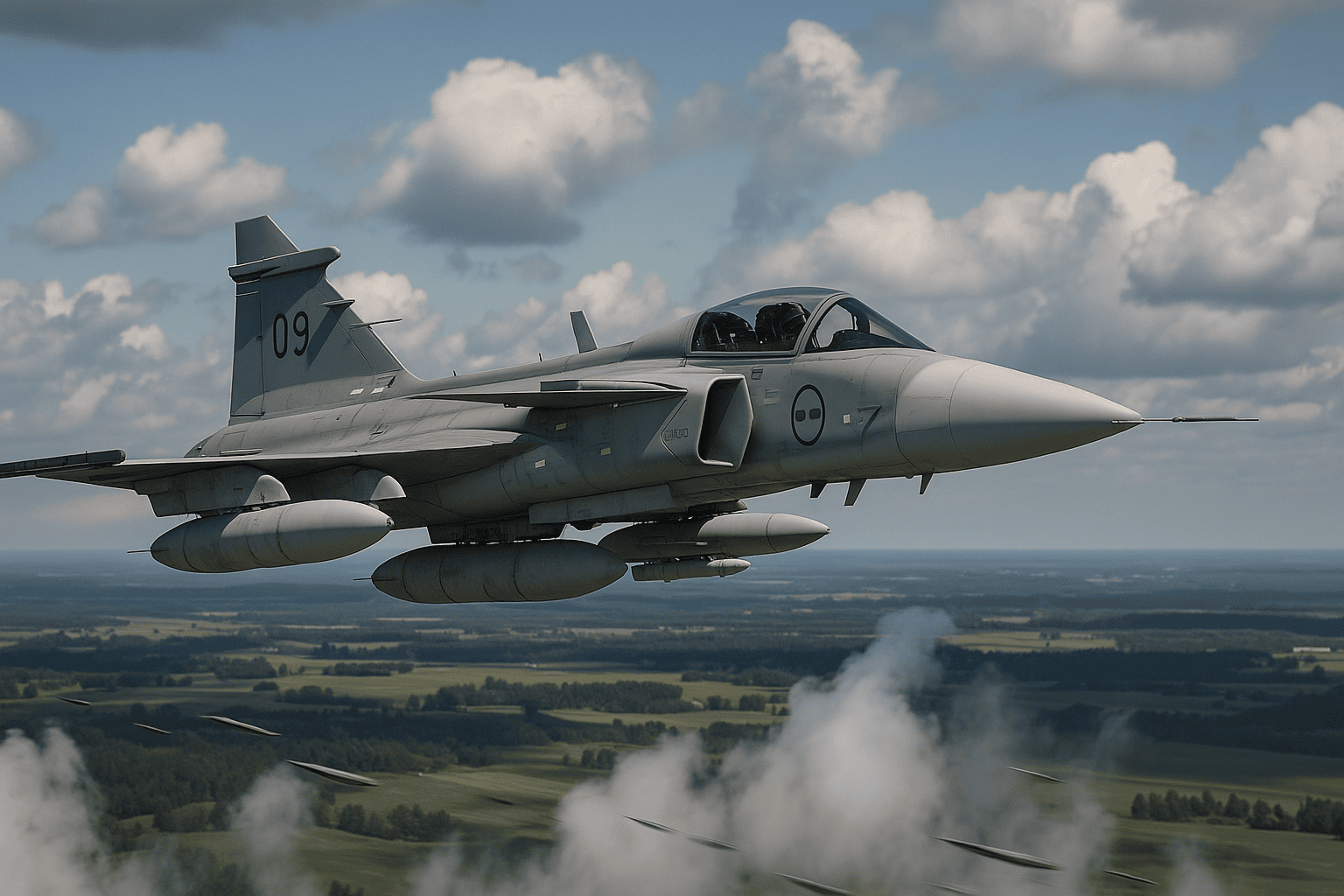

Swedish defense company Saab is developing an AI-driven unmanned combat aircraft, dubbed the 'Loyal Wingman,' designed to operate alongside manned fighter jets. With test flights planned for next year and expected market readiness by 2030, the system raises concerns over potential future AI hazards in military applications. [AI generated]