The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

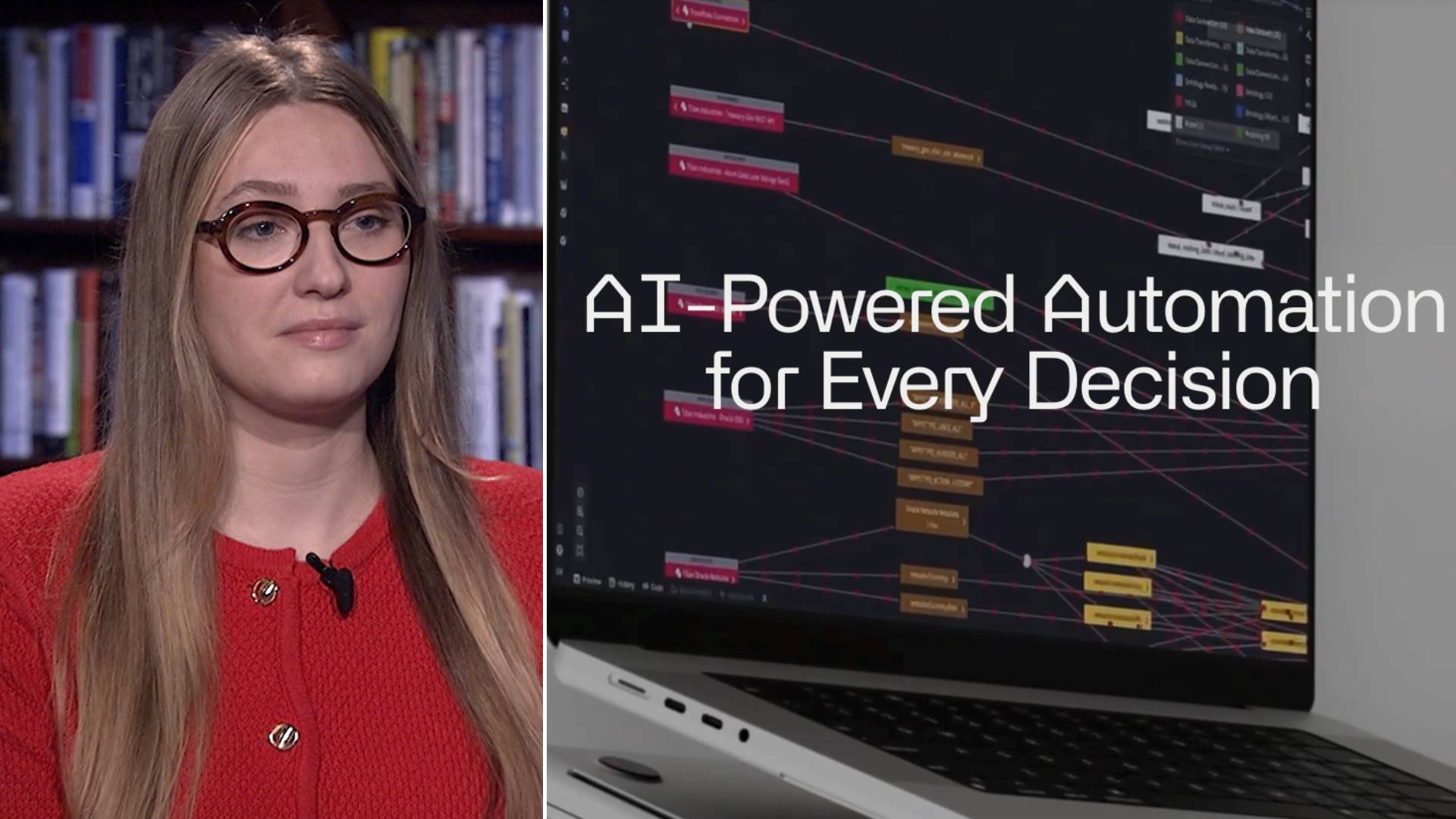

Multiple reports reveal that Palantir, an AI-driven contractor linked to Peter Thiel, has been tasked by the Trump administration with merging data from various federal agencies into digital IDs for Americans. This raises significant concerns about potential privacy violations and misuse, highlighting an emerging AI hazard. [AI generated]
