The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

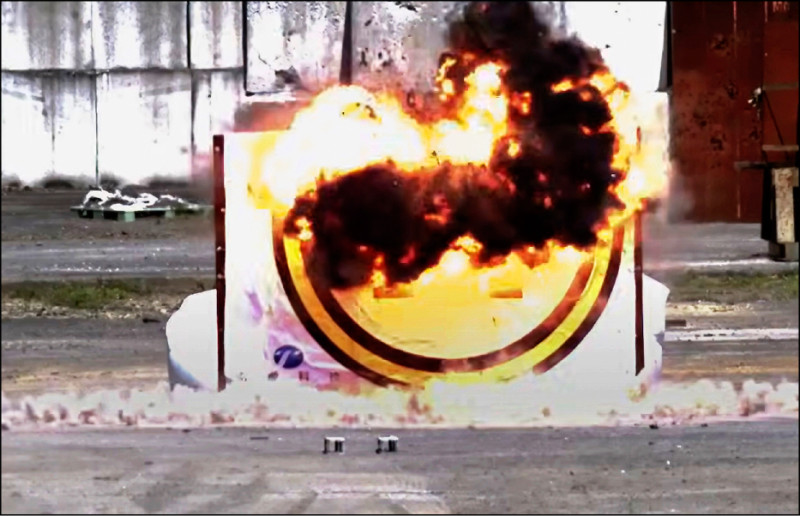

Multiple Taiwanese agencies and academic institutions have demonstrated AI-assisted attack drones in controlled tests. The systems showcased autonomous target recognition and precision strike capabilities, with an emphasis on both manual and programmed attack modes. Although all tests were successful, experts warn that AI-enabled weapons could pose hazards if they are misused or malfunction. [AI generated]