The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

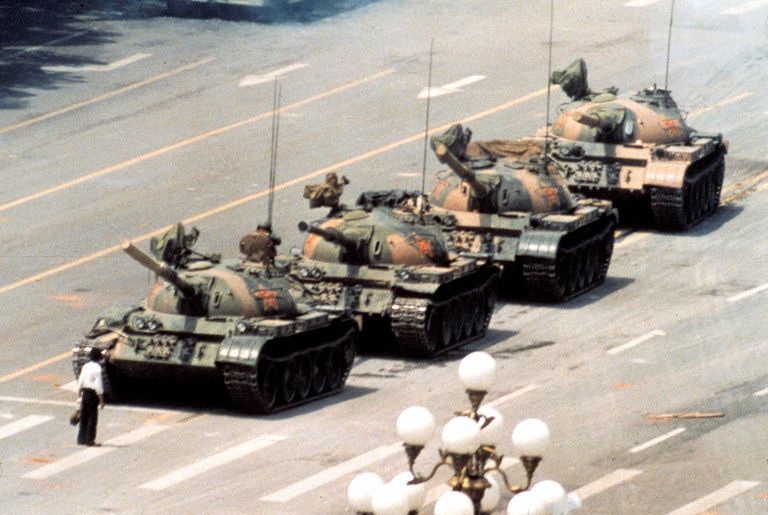

Leaked documents reveal that Chinese authorities are using advanced AI censorship tools to automatically remove online references to the Tiananmen Square massacre. The system flags even subtle visual metaphors – like a banana and four apples arranged to imitate the famous 'Tank Man' image – a practice that erases historical memory and violates free expression. [AI generated]