The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

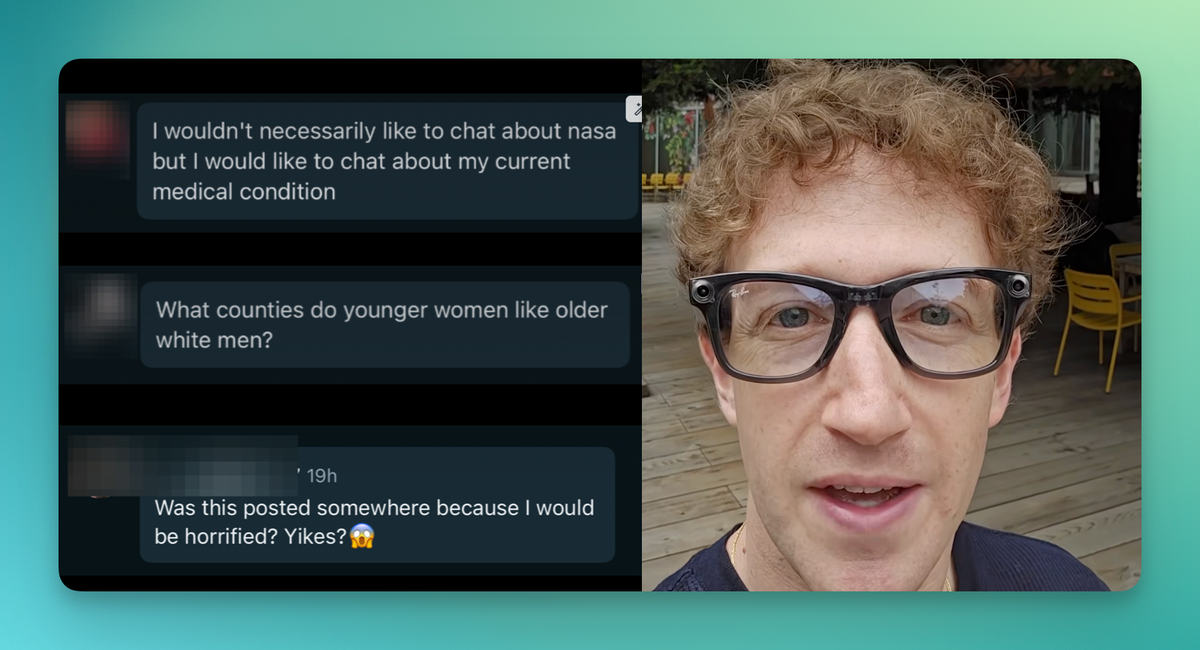

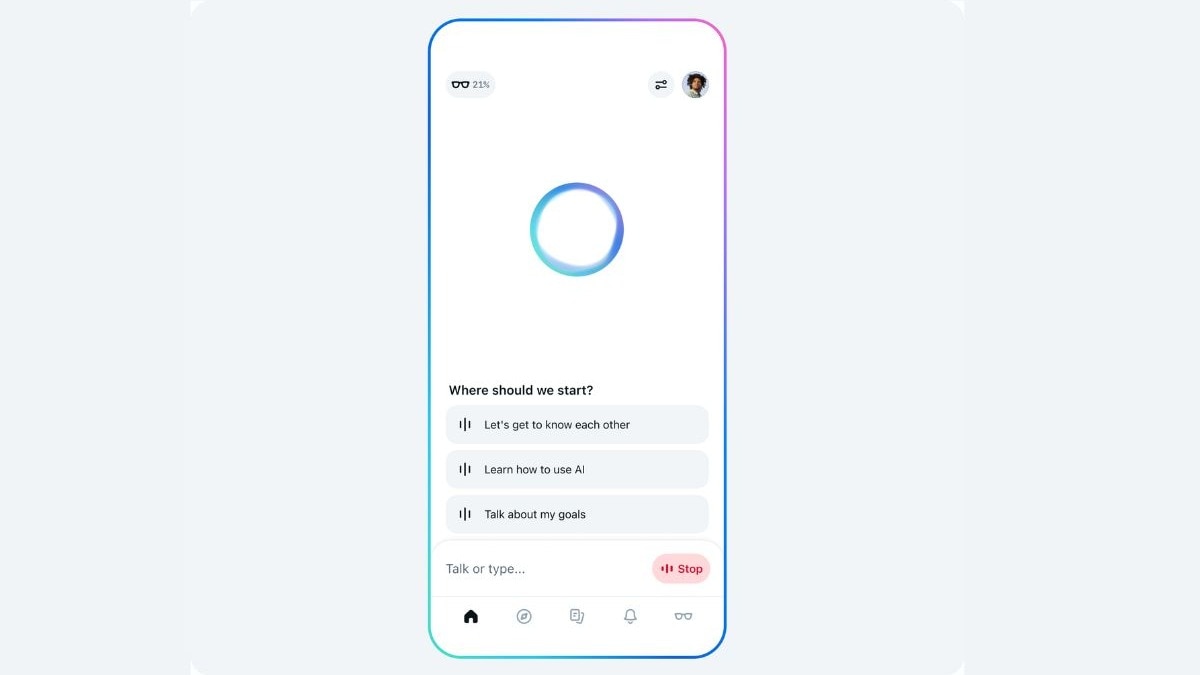

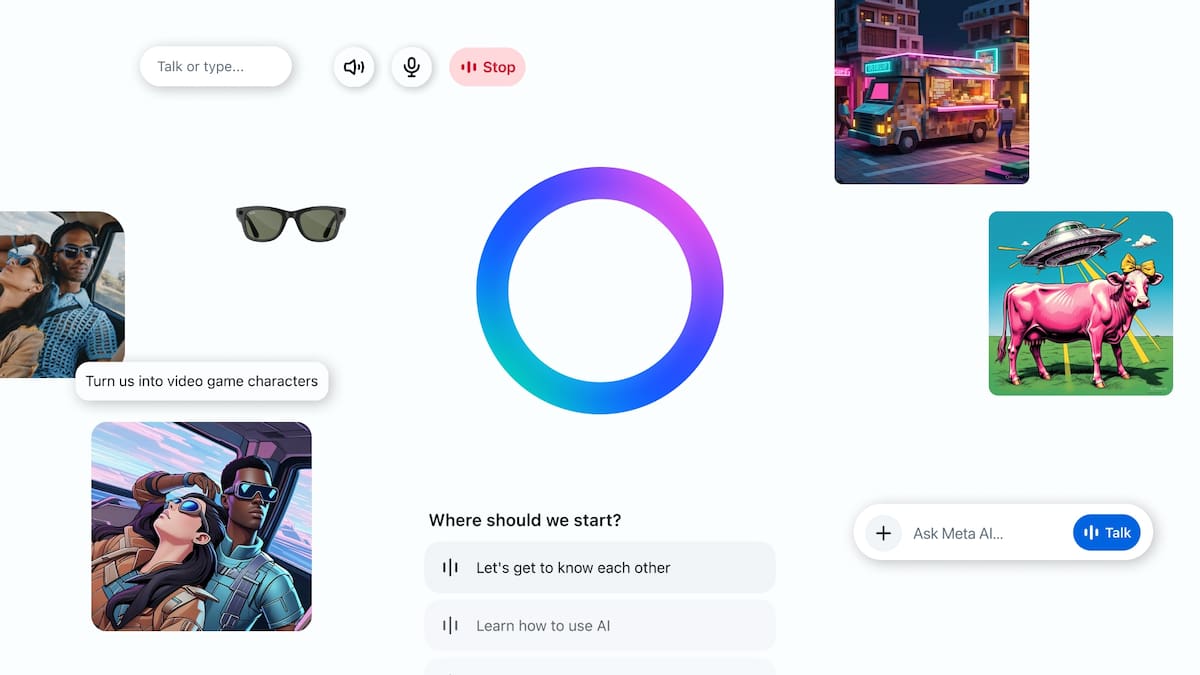

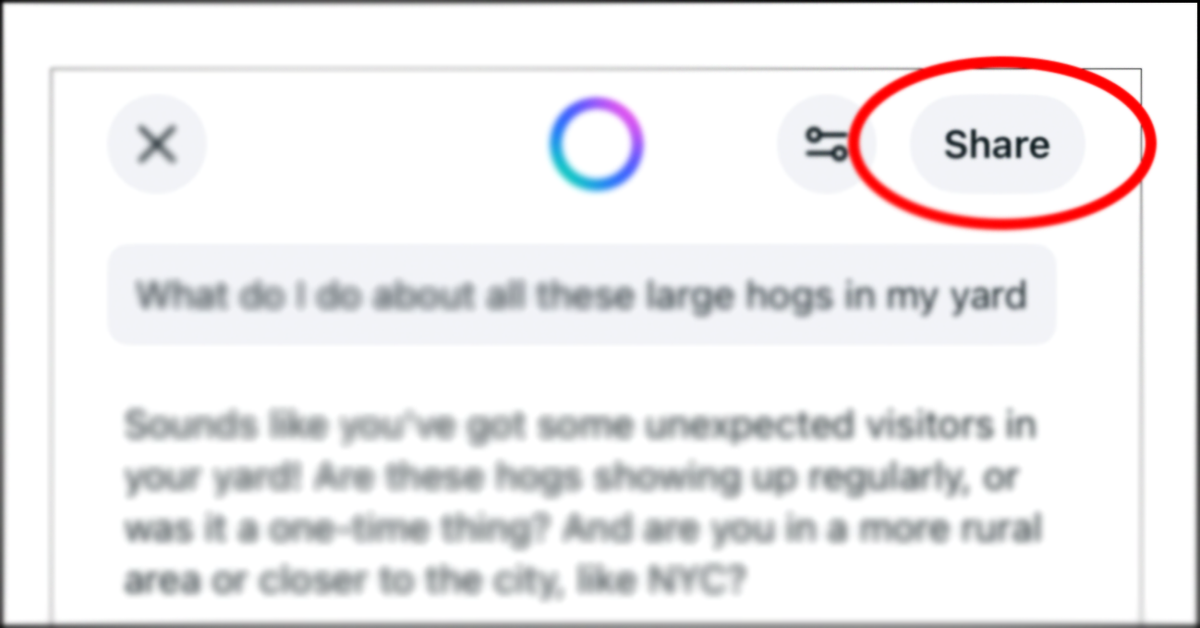

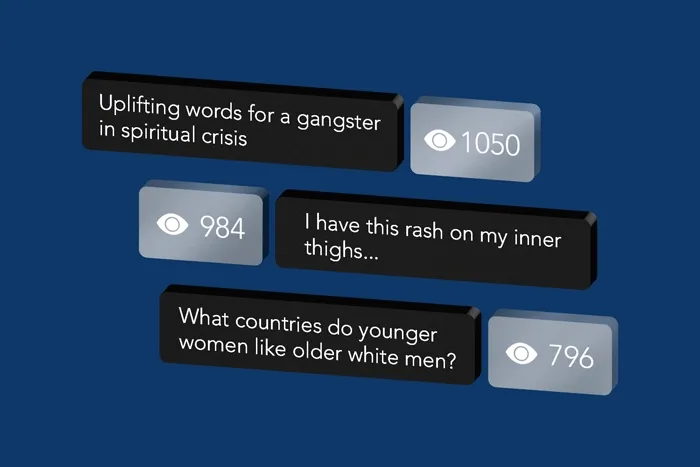

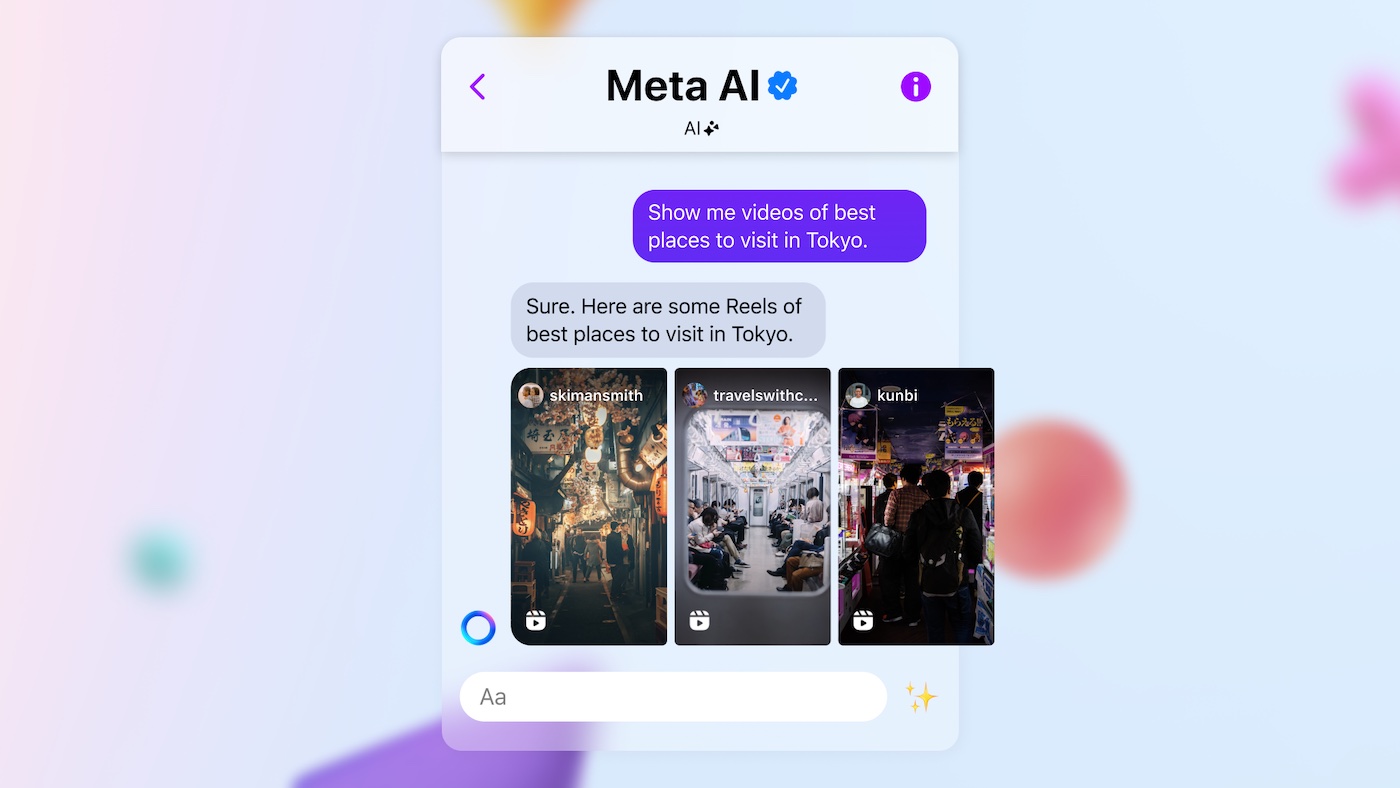

Meta's AI app features a public 'Discover' feed that displays user interactions, leading to unintentional sharing of sensitive personal information. Many users are unaware their posts are public, resulting in privacy violations and emotional distress, highlighting design flaws in user consent and data protection.[AI generated]
