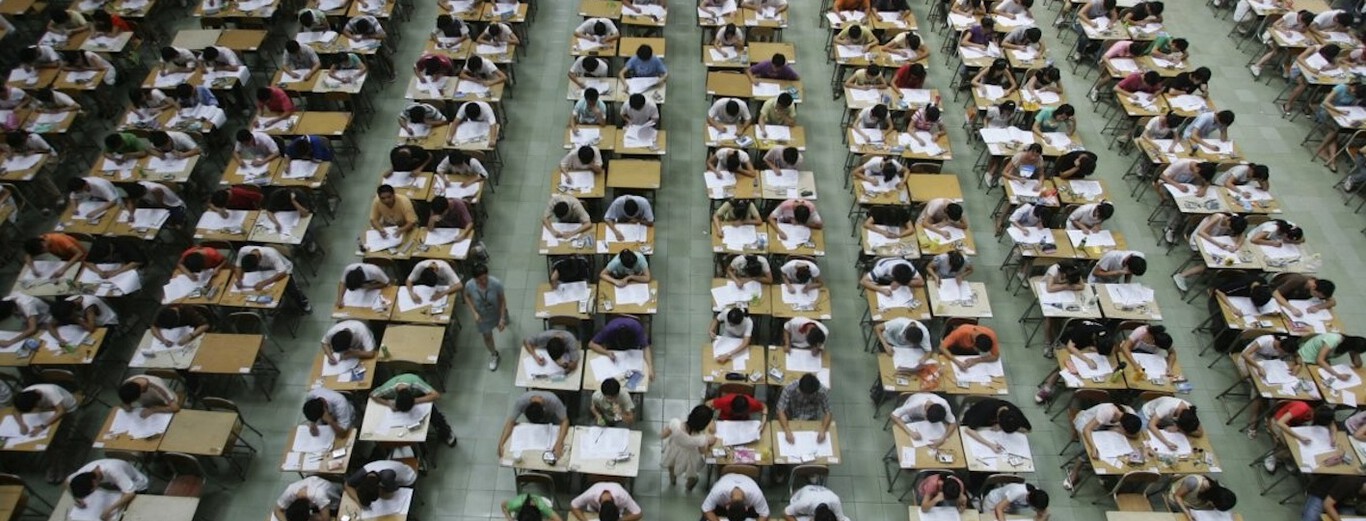

The article explicitly mentions AI systems capable of photo recognition and of solving exam questions; these features are disabled during exams to prevent cheating. AI-assisted cheating would violate academic integrity and undermine the fairness of the exam process, harming communities and individuals' right to fair assessment. However, the article reports no actual cheating incident or harm; it focuses on the potential for harm and on the preventive disabling of AI features. This fits the definition of an AI Hazard: AI use could plausibly lead to harm, and measures are taken to prevent it. Because no harm has been realized, it is not an AI Incident. Nor is it merely complementary information, because the main focus is the potential harm and the preventive action rather than responses or ecosystem updates. AI Hazard is therefore the appropriate classification.
