The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

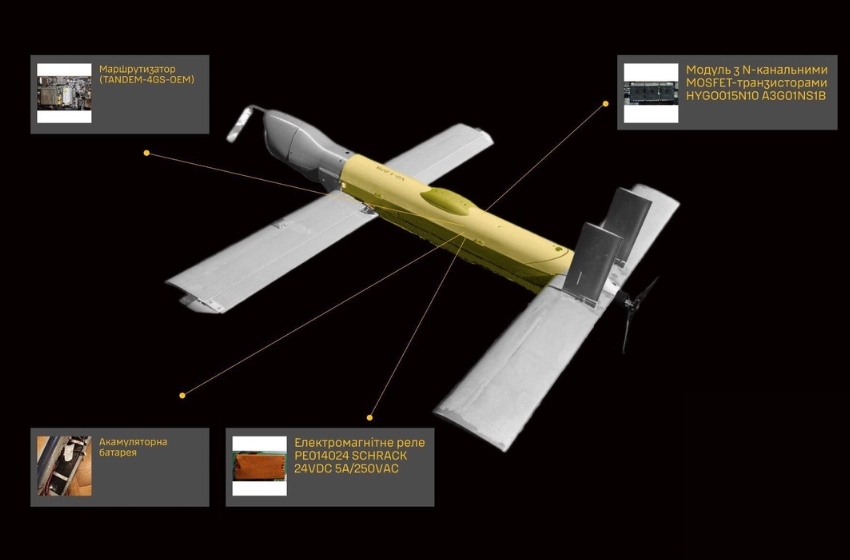

Ukrainian intelligence reports that Russia is deploying the V2U attack drone, which uses artificial intelligence for autonomous target search and selection, in the Sumy region. The drone relies on computer vision and NVIDIA Jetson Orin chips, enabling lethal autonomous operations and raising concerns about AI-driven harm in active conflict. [AI generated]

Why's our monitor labelling this an incident or hazard?

The V2U drone is an AI system because it uses AI-based targeting and image recognition software for autonomous target selection. Its deployment in active conflict and its use by Russian forces to select targets directly lead to harm (injury or death) to persons, fulfilling the criteria for an AI Incident. The article details the AI system's actual use, not just potential or future harm, so it is not a hazard. Nor is it merely complementary information, since the focus is on the AI system's operational use causing harm. Therefore, this event qualifies as an AI Incident. [AI generated]