The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

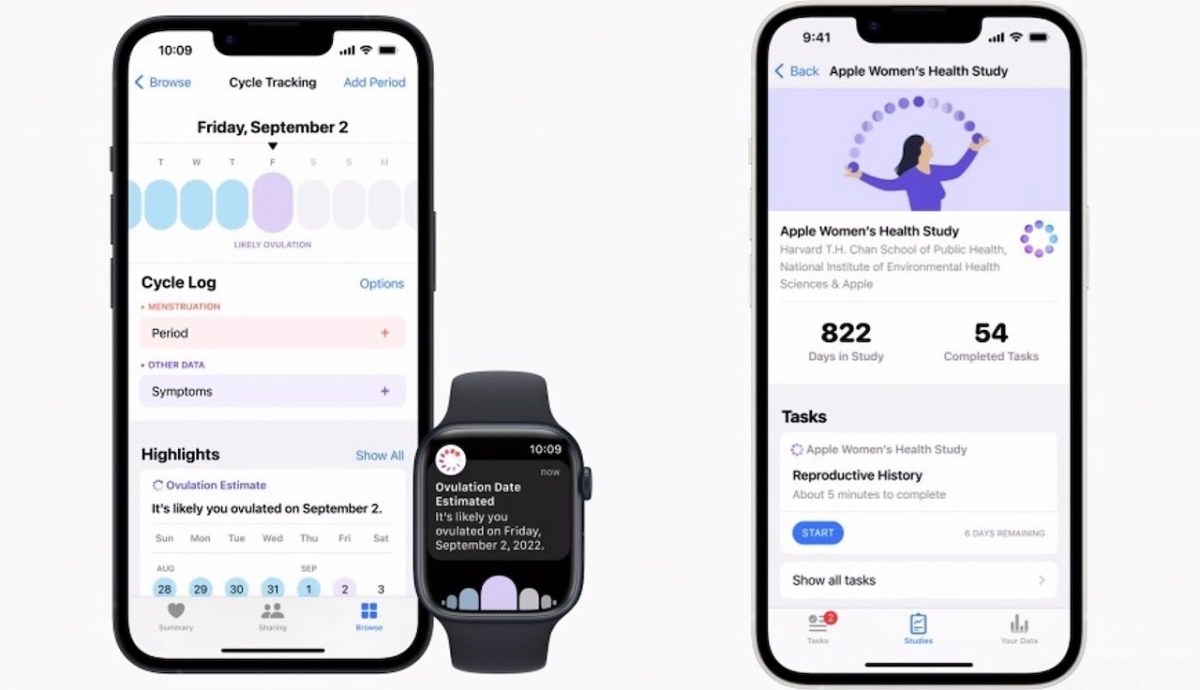

A University of Cambridge report reveals that period tracking apps, which use AI to process sensitive health data, are selling users' personal information at scale. This exposes women to privacy violations, discrimination in employment and healthcare, targeted advertising, and potential reproductive control, posing significant safety and human rights risks. [AI generated]
