The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

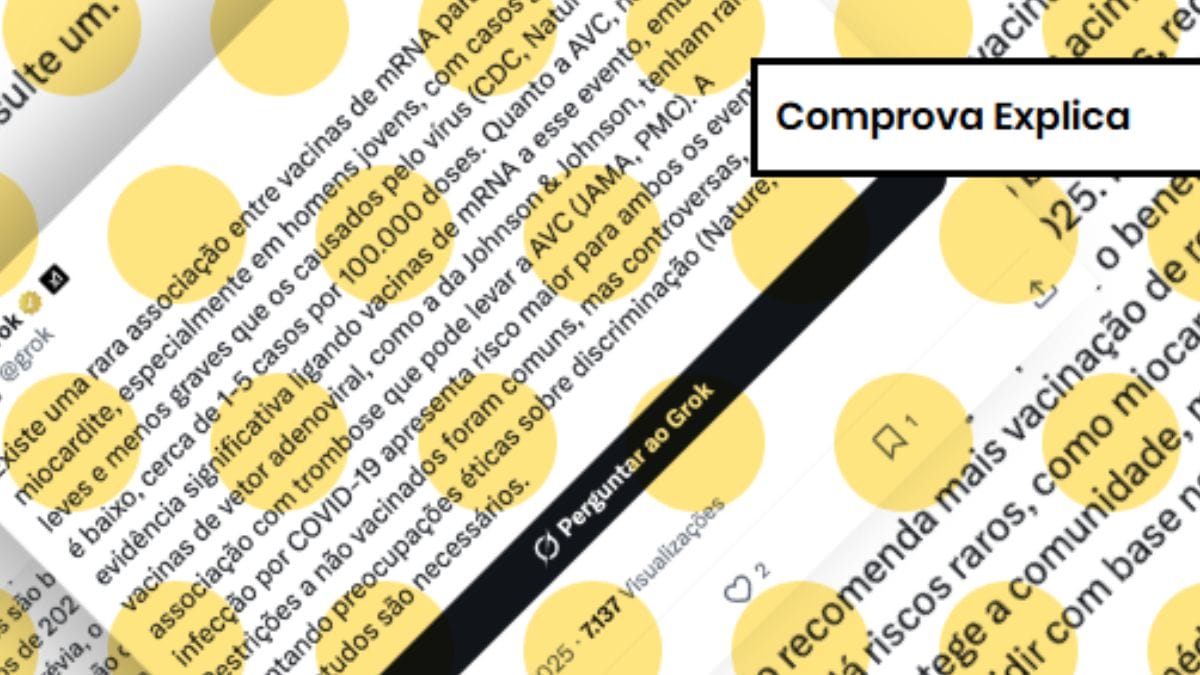

AI chatbots like Grok, ChatGPT, and Gemini have been found to provide inaccurate or misleading information on sensitive topics such as COVID-19 vaccines, sometimes repeating debunked claims or fabricating sources. This misinformation poses risks to public health when users rely on these AI-generated responses without verification. [AI generated]