The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

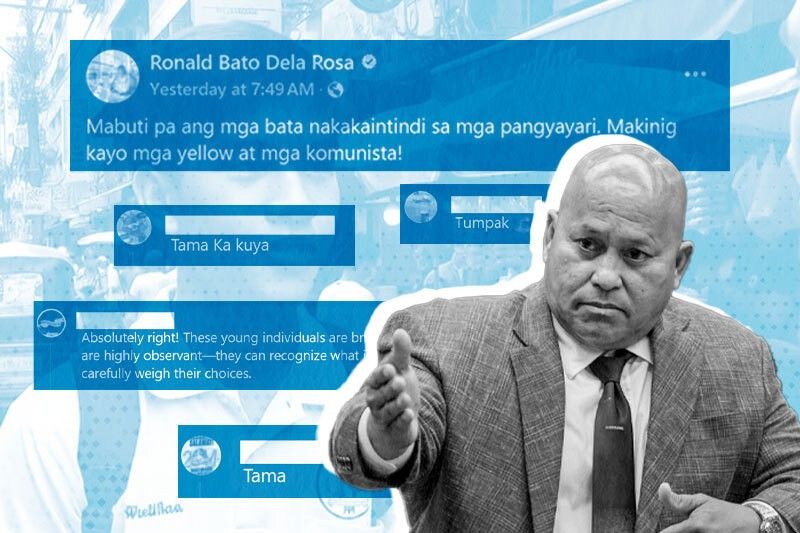

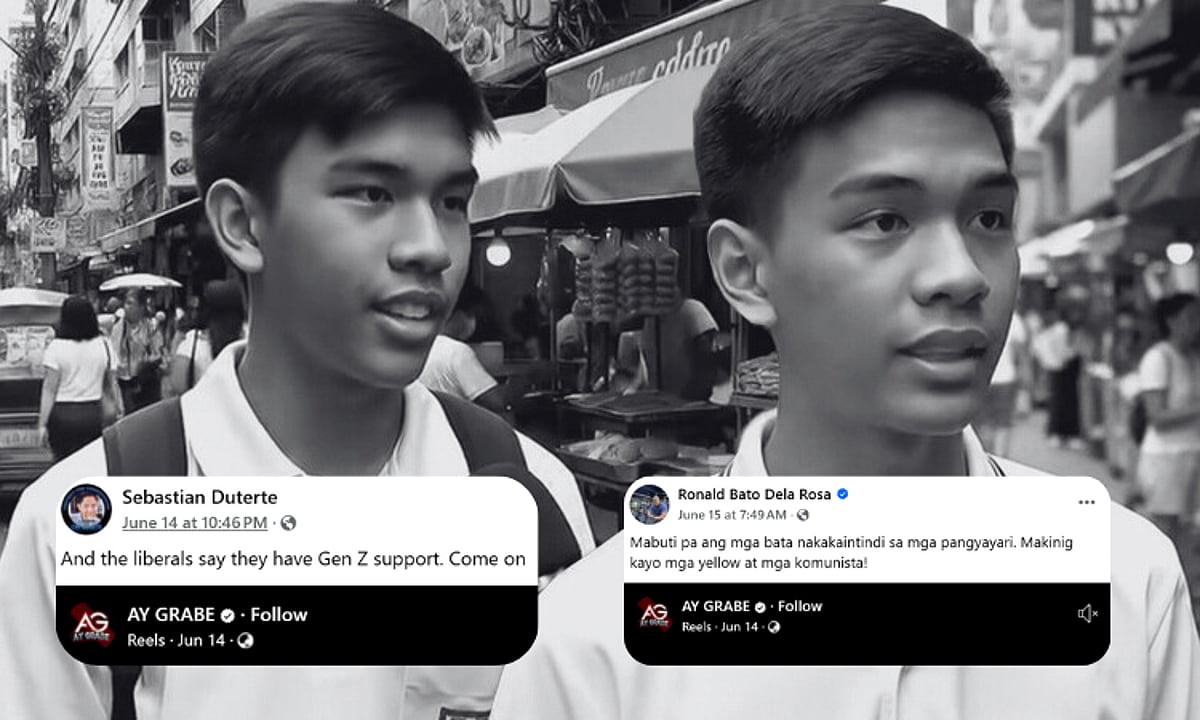

Philippine officials, including Senator Ronald dela Rosa and Davao City Mayor Sebastian Duterte, shared an AI-generated deepfake video featuring fake student interviews about the impeachment of Vice President Sara Duterte. The incident drew public concern and official condemnation, illustrating how AI-generated disinformation shared by public officials can undermine public trust. [AI generated]