The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

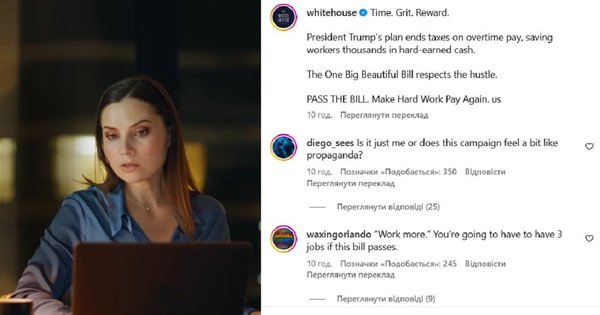

The White House published an Instagram video promoting a tax bill that featured, without her consent, an AI-generated likeness of Ukrainian actress Antonina Khizhnyak. The deepfake, likely created from publicly available images, raises concerns about the unauthorized use of personal likenesses in political communication and about potential violations of personal and intellectual property rights.[AI generated]