The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

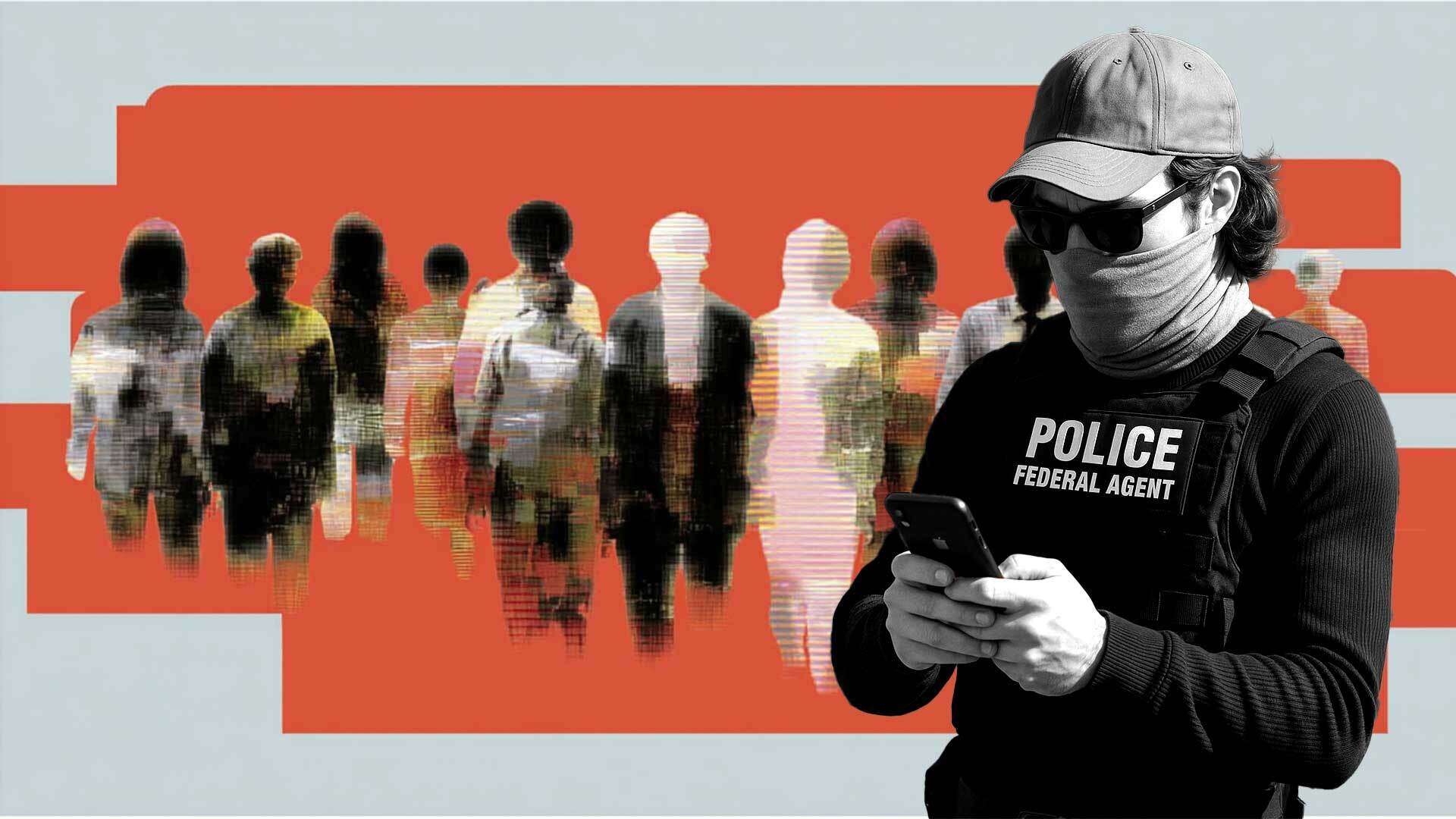

U.S. Immigration and Customs Enforcement (ICE) has deployed the Mobile Fortify app, which uses AI-driven facial recognition and fingerprint biometrics to identify individuals in real time. Originally intended for border use, the technology is now used domestically, raising concerns over privacy violations, wrongful arrests, and human rights abuses due to unreliable matches and lack of oversight. [AI generated]