The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

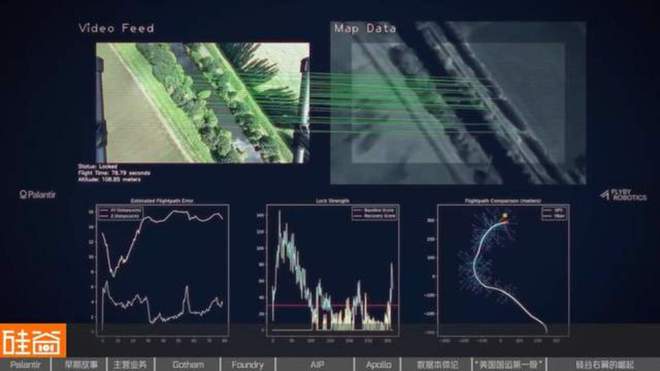

Palantir has developed AI systems that have been deployed in military operations, notably in the Ukraine conflict, including for the autonomous identification and targeting of enemy assets. These AI-driven tools have directly contributed to physical harm and military disruption, marking a significant instance of AI involvement in warfare. [AI generated]