The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

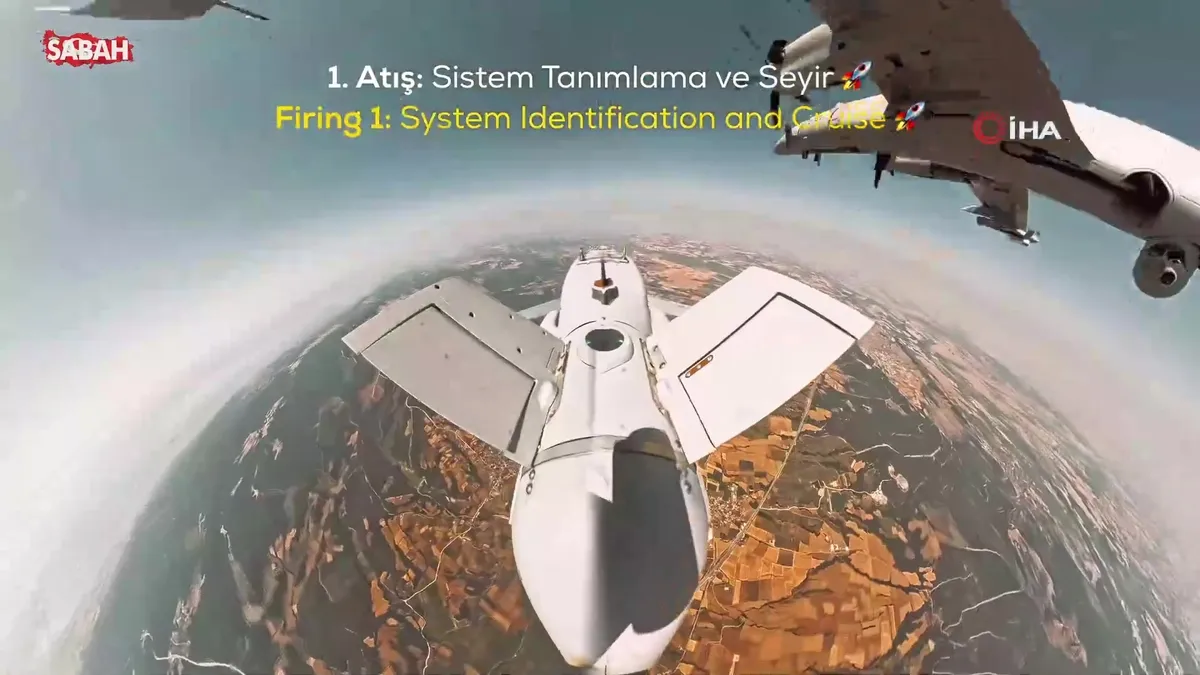

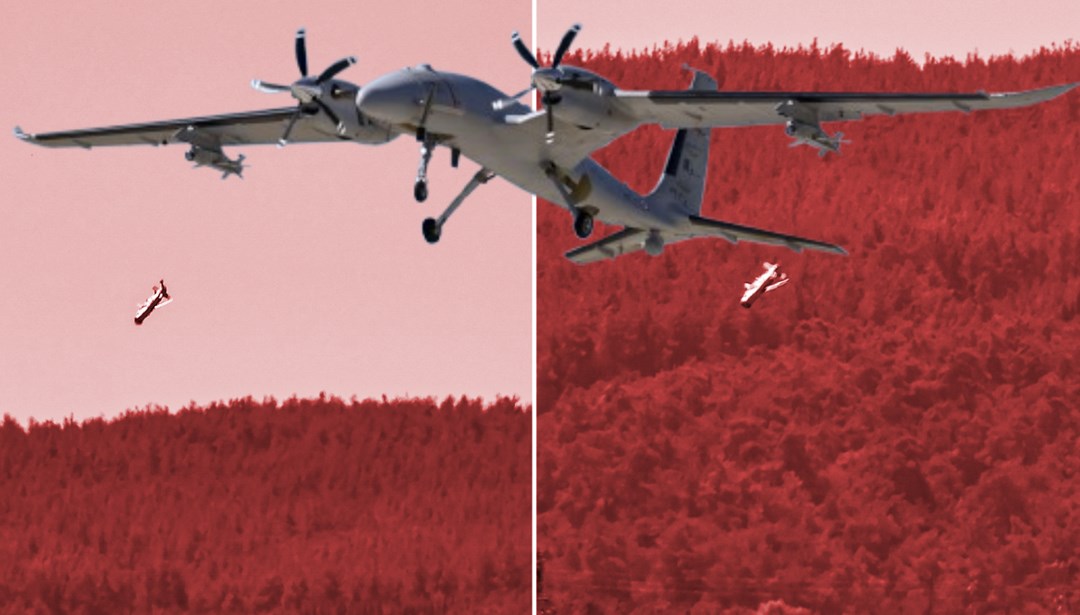

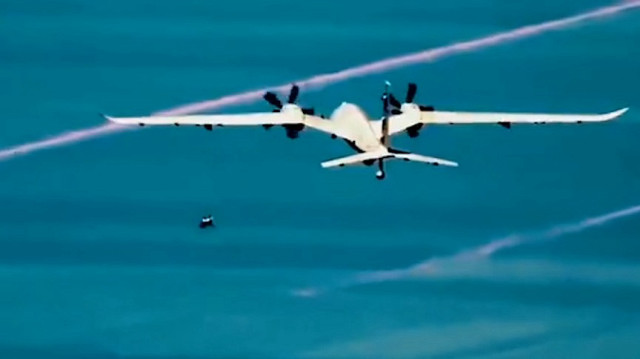

Baykar's AI-powered KEMANKEŞ 1 mini cruise missile, integrated with the Bayraktar AKINCI drone, successfully destroyed aerial targets in recent tests. The AI system autonomously identified and struck moving targets with high precision, demonstrating the operational capability and potential risks of lethal autonomous weapon systems. [AI generated]