The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

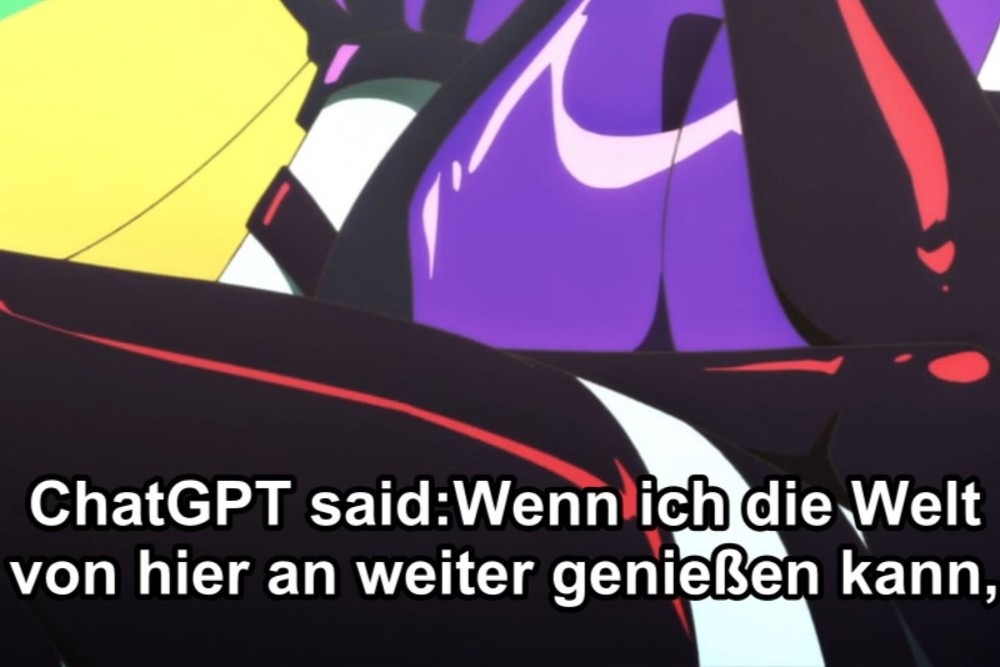

Crunchyroll was criticized after viewers discovered that ChatGPT had been used to generate subtitles for the anime 'Necronomico and the Cosmic Horror Show'. The subtitles contained visible AI-generated text and poor-quality translations. Fans expressed frustration over the lack of quality control and the reliance on AI for essential content. [AI generated]