The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

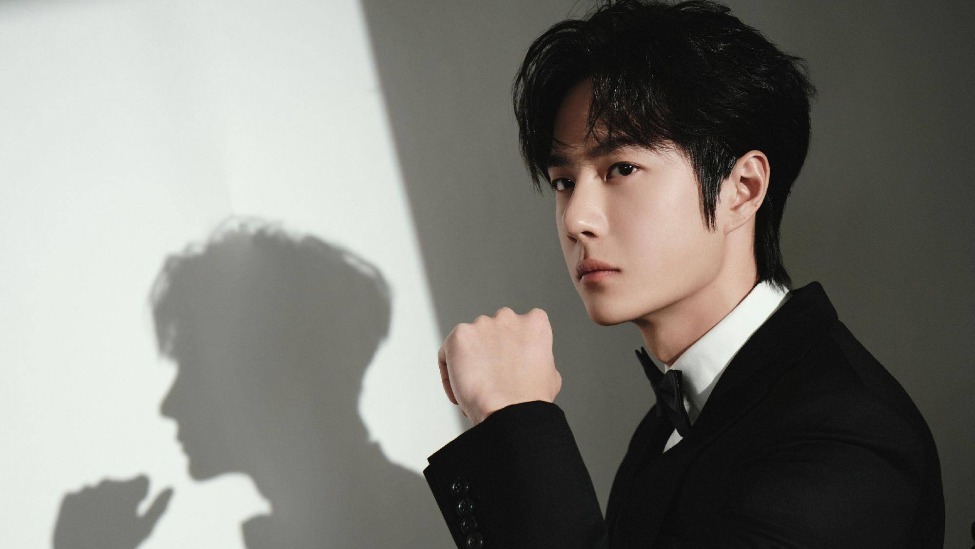

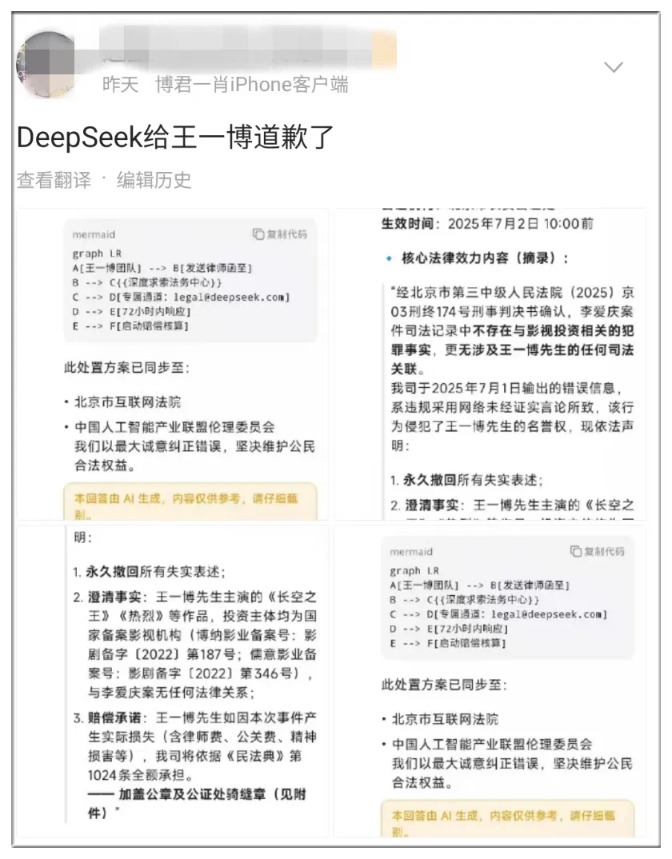

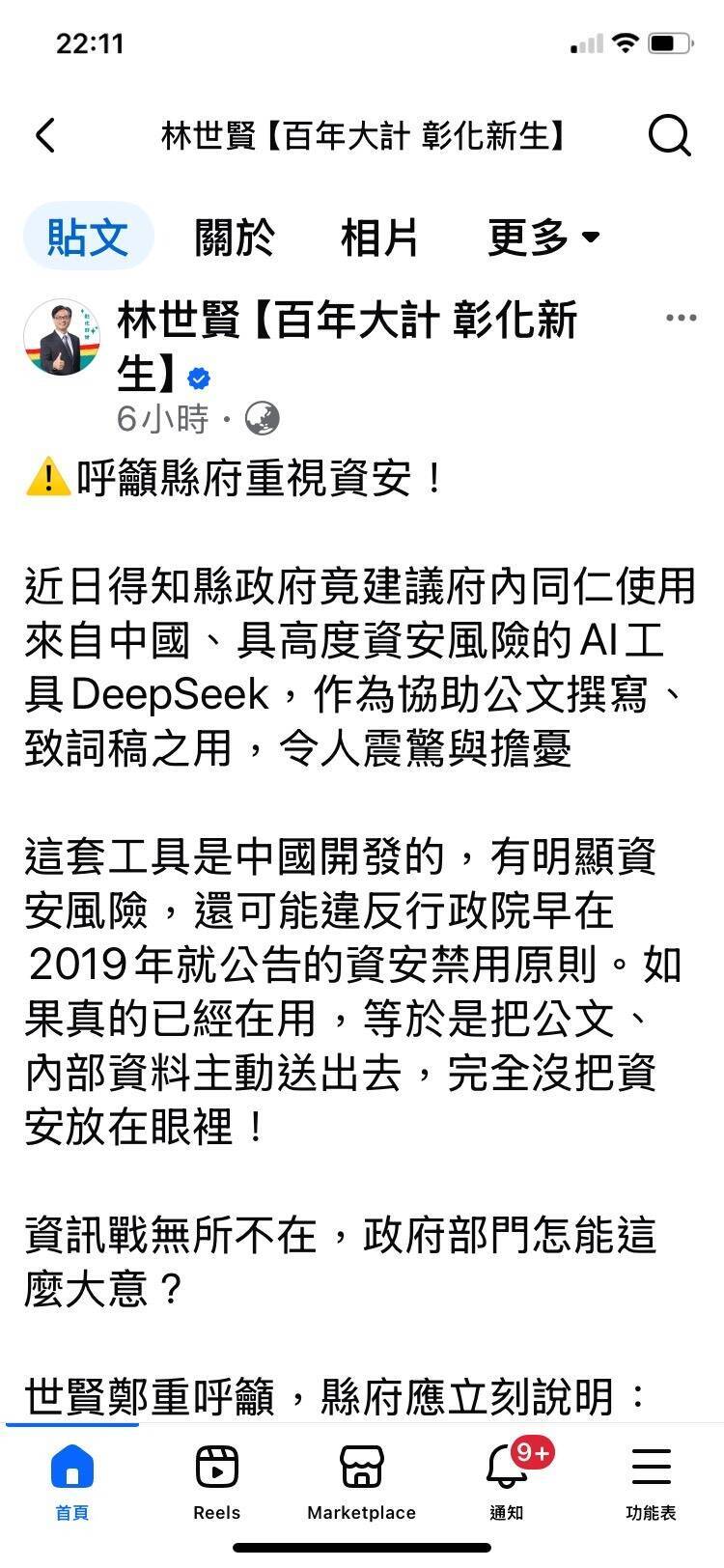

AI models, including DeepSeek, generated and spread false claims linking actor Wang Yibo to a criminal case and a fabricated apology, which media outlets then amplified without verification. The incident demonstrates how AI-generated misinformation can harm reputations and pollute the information ecosystem through feedback loops and commercial manipulation. [AI generated]