The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

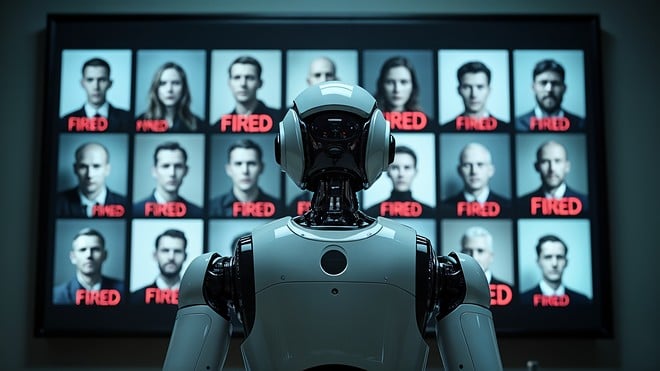

Microsoft reported saving more than $500 million through AI tools such as Copilot, particularly in its call centers, and made AI adoption mandatory internally. These automation gains directly contributed to the layoff of 15,000 employees in 2025, raising concerns about the human cost of large-scale, AI-driven workforce reductions.[AI generated]