The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

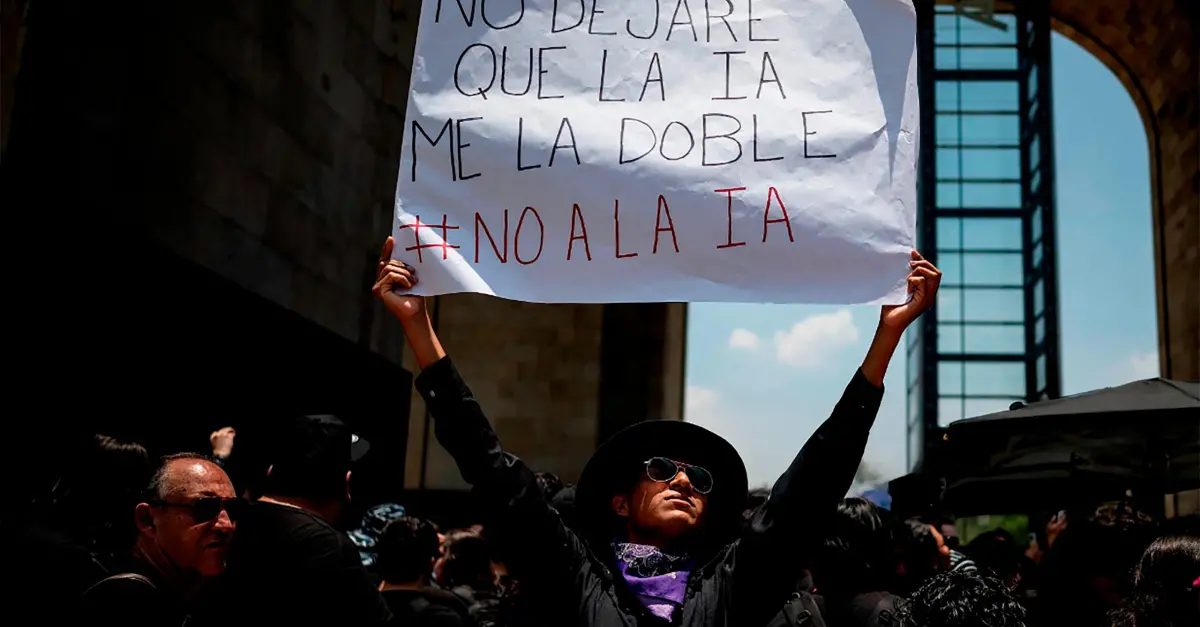

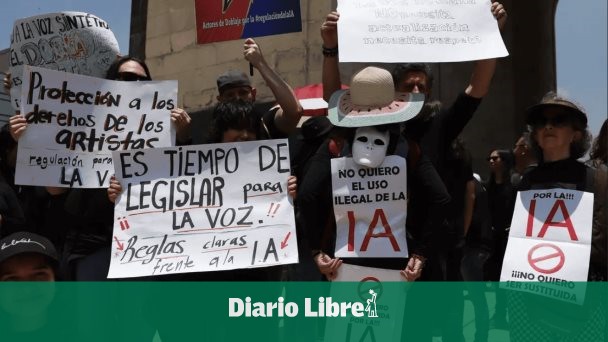

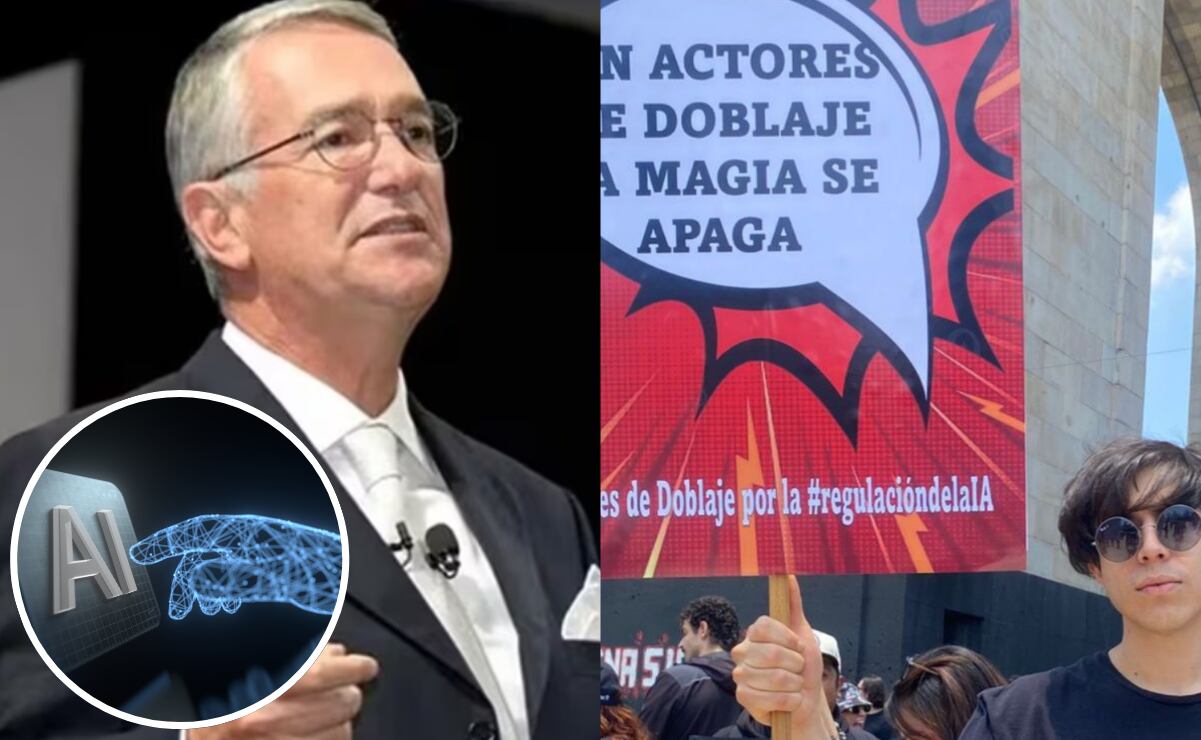

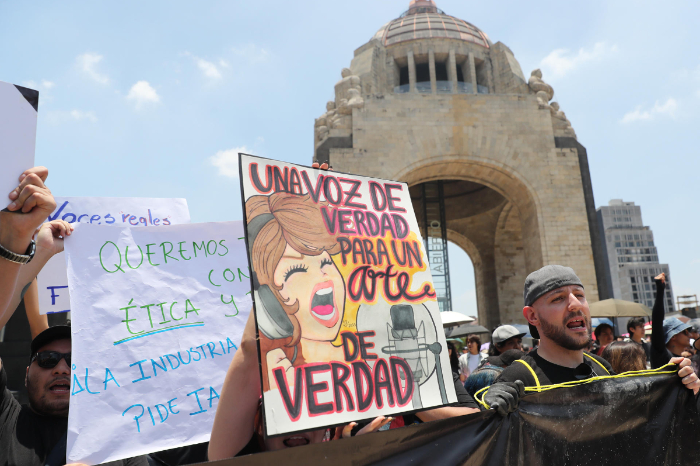

Mexican voice actors and artists protested in Mexico City after the National Electoral Institute (INE) used AI to clone the voice of deceased actor Pepe Lavat without his family's consent. The group is demanding legislation to protect their voices from unauthorized AI cloning, citing economic and intellectual property harms. [AI generated]