The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

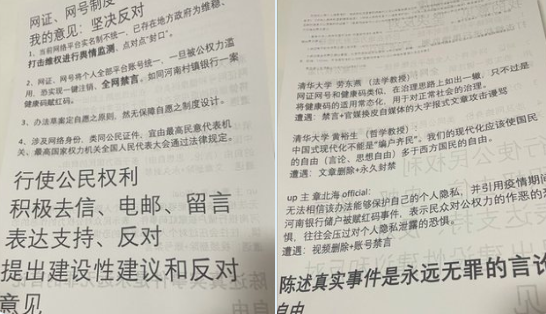

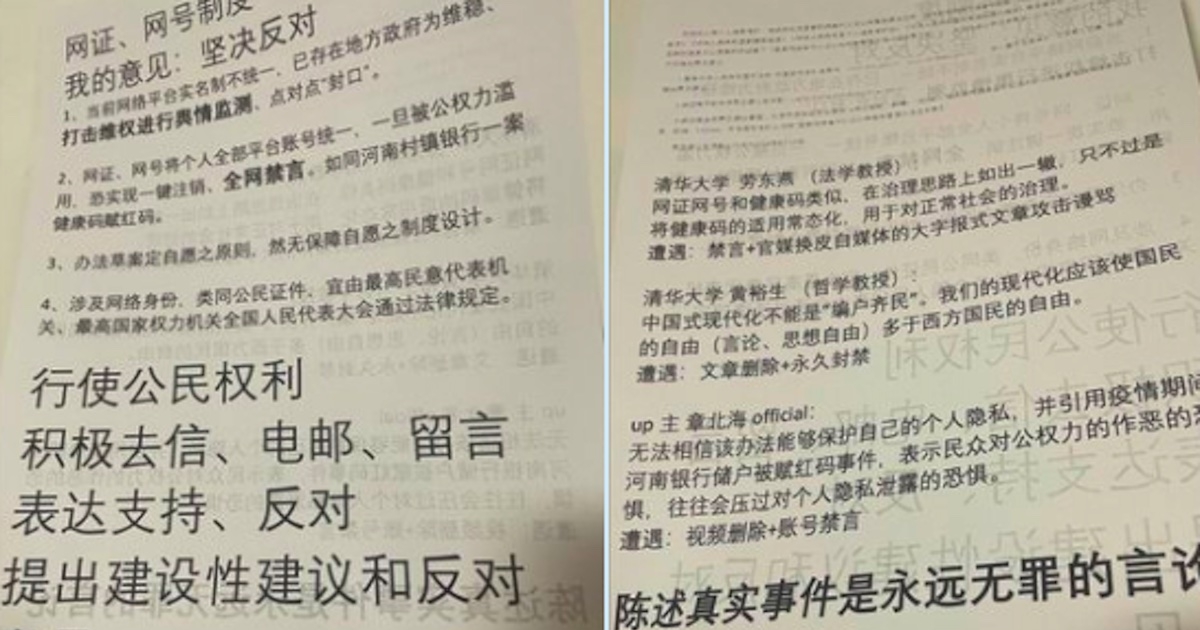

China has launched a national digital identity system integrating AI and big data for real-name online registration and surveillance, raising widespread concerns over censorship, privacy violations, and repression. Simultaneously, authorities intensified AI-driven campaigns to police online content affecting minors, addressing ongoing harms such as exploitation and mental health risks.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves the deployment of a national digital identity authentication system that is integrated with AI and big data technologies to monitor and control online behavior. Although the article does not yet report direct harm, it raises credible concerns that the system could enable large-scale surveillance, censorship, and repression, which would constitute violations of human rights and harm to communities. The system's potential use in predictive policing and behavior scoring is a plausible future risk. This is therefore classified as an AI Hazard rather than an AI Incident: the harm has not yet materialized, but it is both foreseeable and credible.[AI generated]