The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

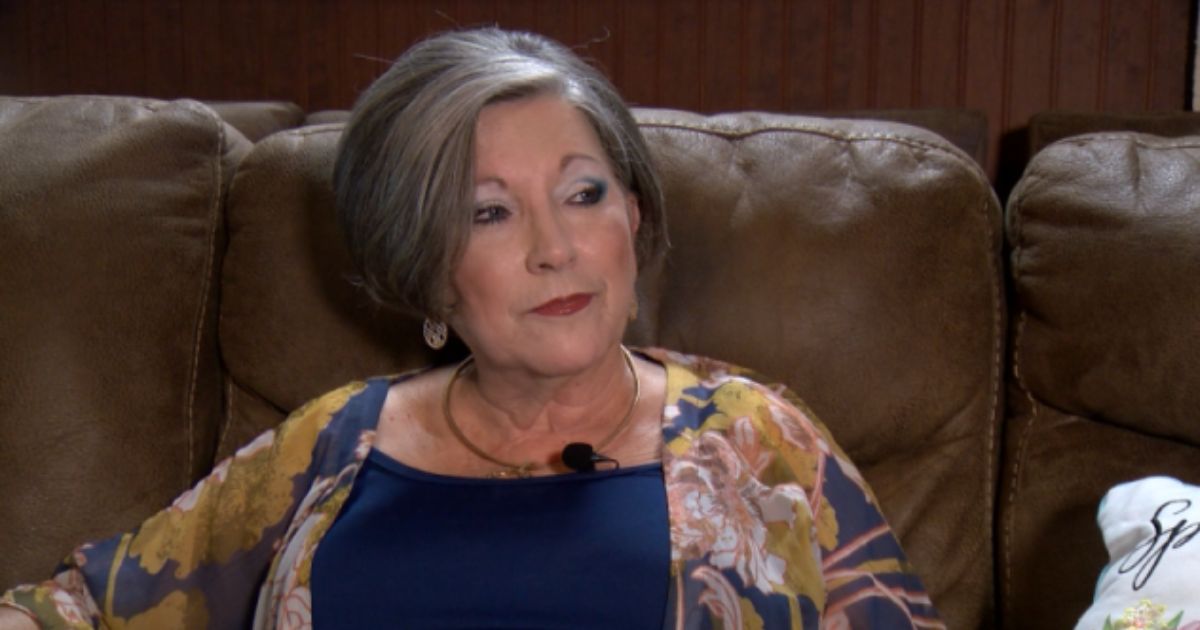

Scammers used AI to clone the voice of a Florida woman's daughter and convinced the mother that her daughter was in legal trouble and needed $15,000 for bail. The realistic AI-generated voice led the victim to transfer the money, resulting in significant financial and emotional harm. [AI generated]
