The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

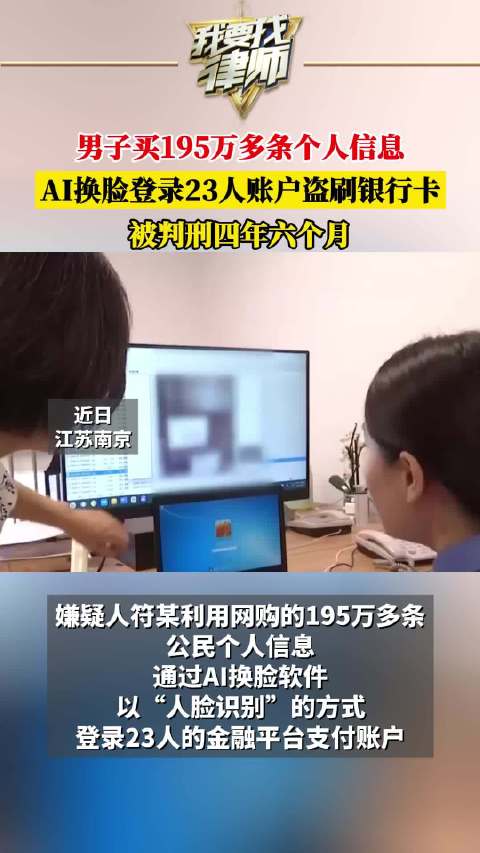

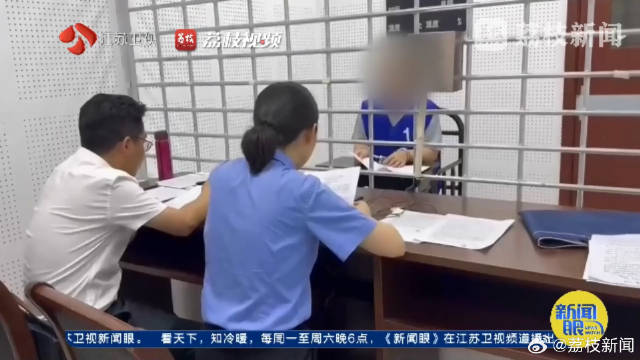

A man in Nanjing illegally purchased 1.95 million citizens' personal data and used AI face-swapping software to bypass facial recognition on a financial platform, accessing 23 victims' accounts and stealing 15,996 yuan. He was sentenced to 4.5 years in prison. The case highlights security risks in AI-driven authentication systems. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves an AI system (AI face-swapping software) used to defeat facial recognition security, leading to unauthorized access to victims' financial accounts and financial losses. This constitutes direct harm to persons and violations of their rights. The case was prosecuted and resulted in criminal penalties, confirming the harm occurred. The AI system's use was central to the incident, fulfilling the criteria for an AI Incident. The article also discusses responses and improvements, but the primary focus is on the realized harm caused by the AI misuse. [AI generated]