The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

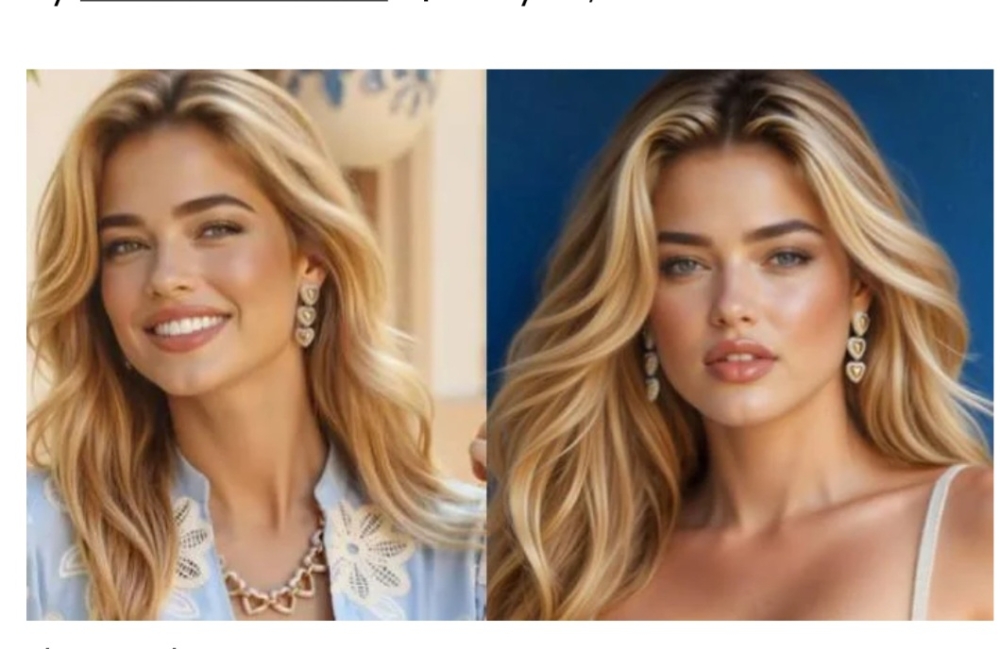

Vogue's August 2025 issue featured AI-generated models, prompting widespread criticism, subscription cancellations, and protests from industry professionals. The use of AI models has led to economic harm for human creatives and sparked debates about authenticity, artistry, and the future of employment in fashion. [AI generated]