The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

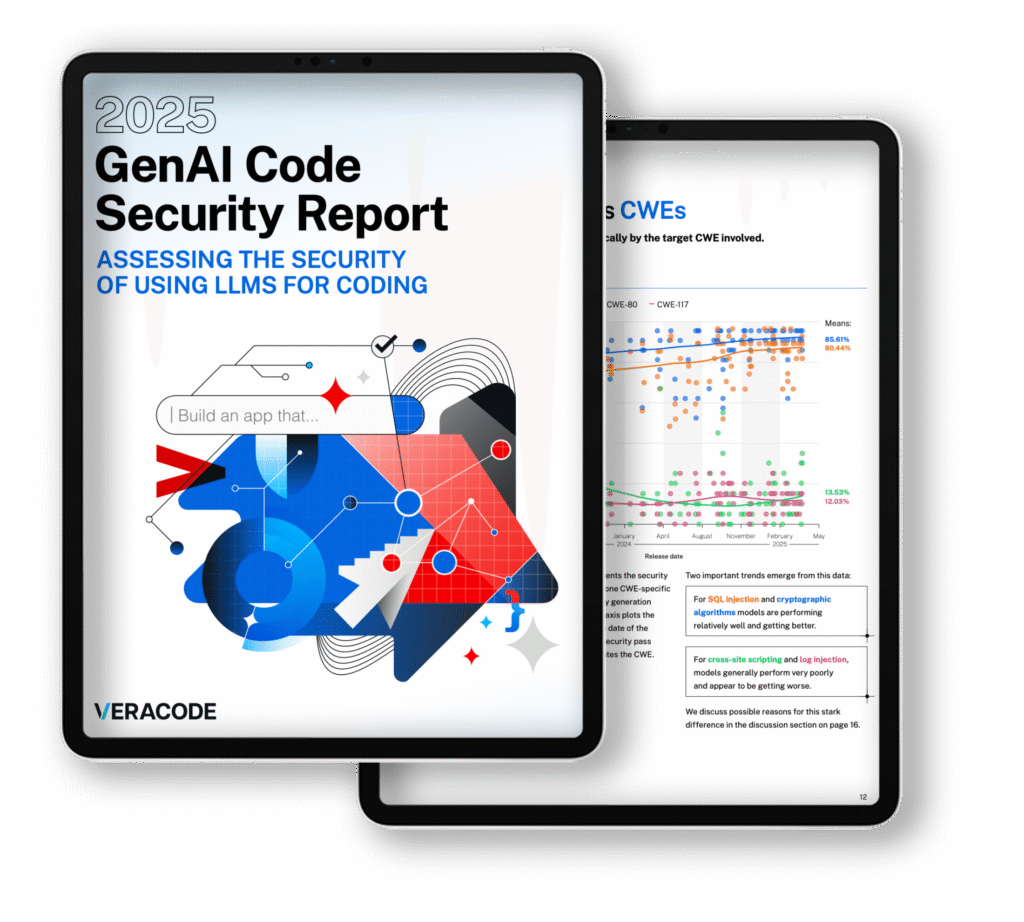

A Veracode study of over 100 large language models revealed that 45% of AI-generated code contains known security vulnerabilities, including serious flaws like SQL injection and cross-site scripting. This widespread issue poses significant cybersecurity risks as developers increasingly rely on AI for software development. [AI generated]
