The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

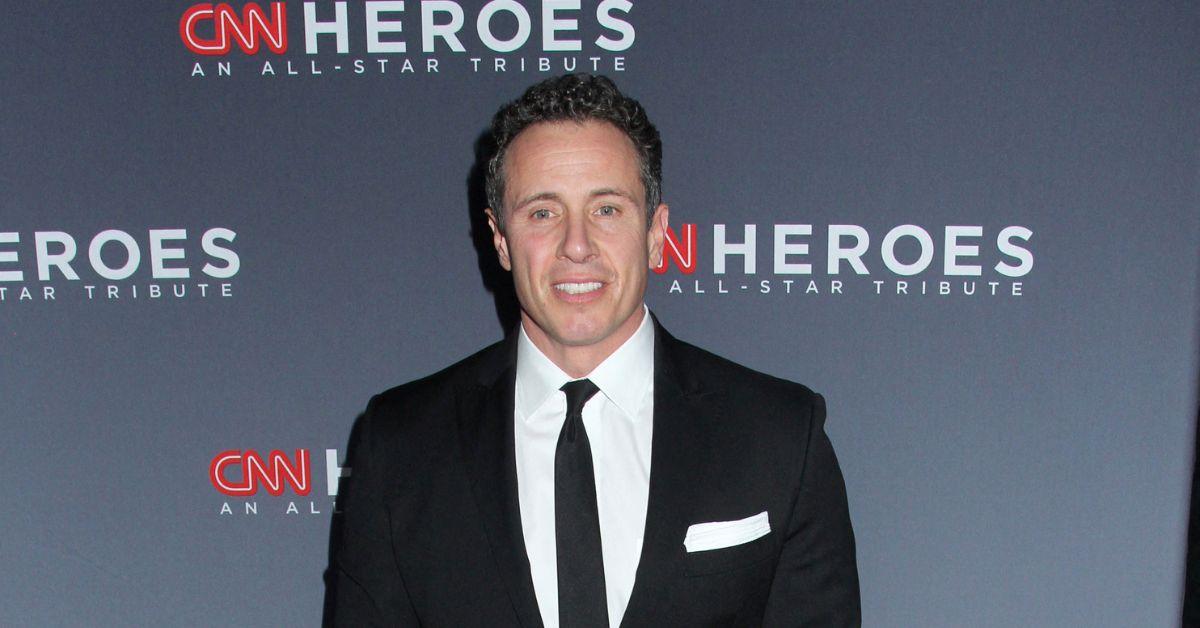

NewsNation host Chris Cuomo mistakenly shared an AI-generated deepfake video of Rep. Alexandria Ocasio-Cortez making inflammatory remarks, believing it to be real. The incident sparked social media backlash and highlighted the risks of AI-generated misinformation and reputational harm caused by deepfakes. [AI generated]