The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

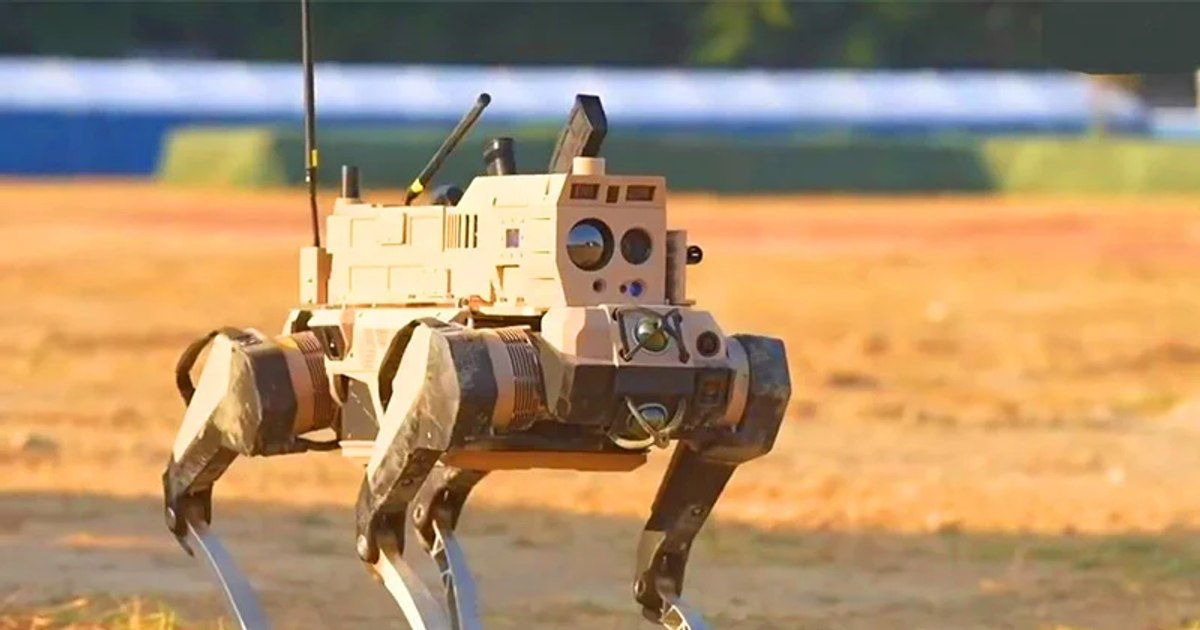

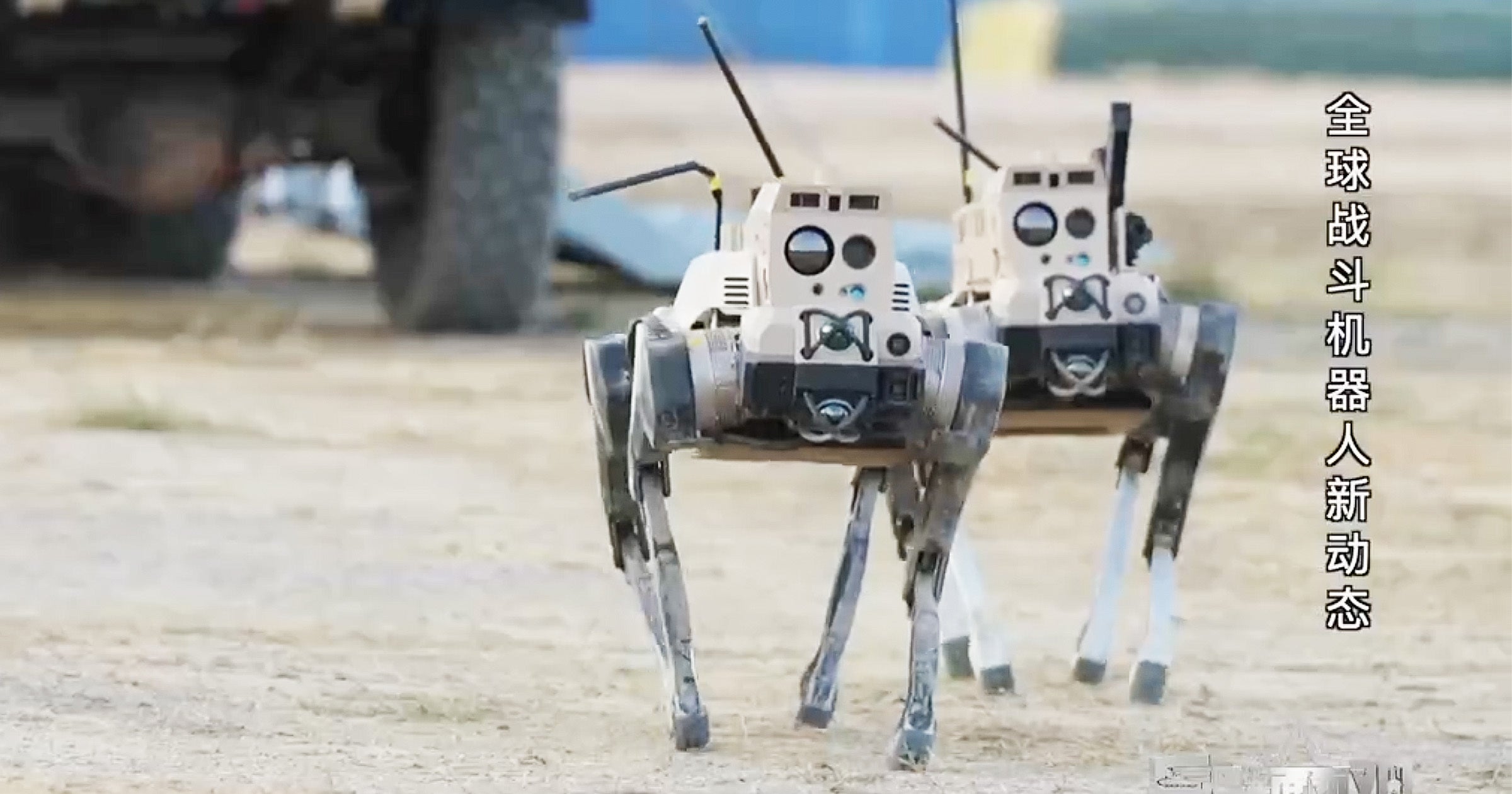

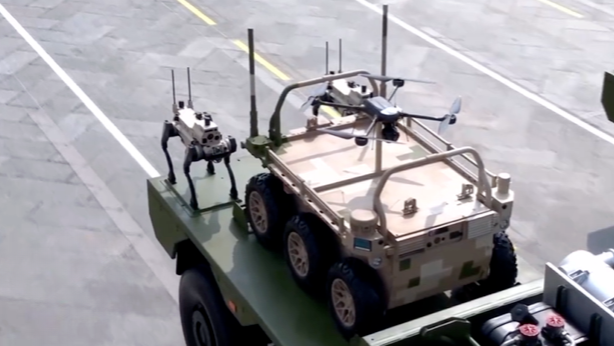

China has publicly showcased a new generation of AI-powered robotic quadrupeds, dubbed 'robotic wolves,' designed to replace human soldiers in dangerous combat scenarios. These autonomous robots can navigate difficult terrain, coordinate as a group, and carry out precise lethal strikes, raising significant concerns about AI-driven harm in modern warfare.[AI generated]