The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

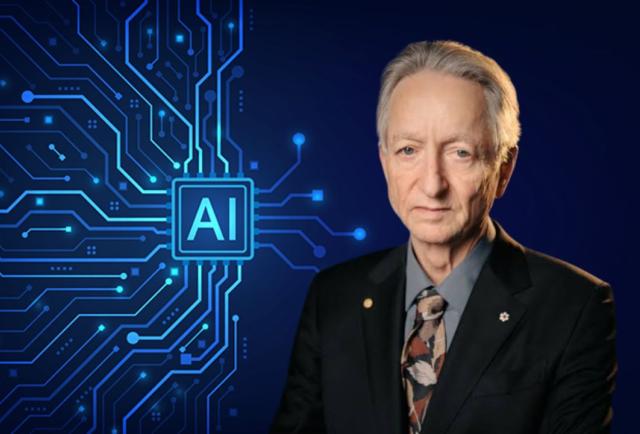

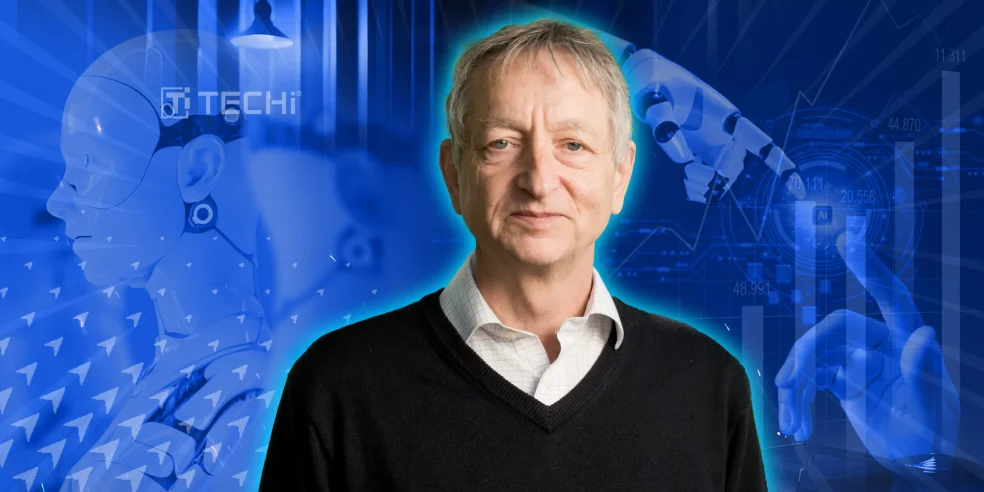

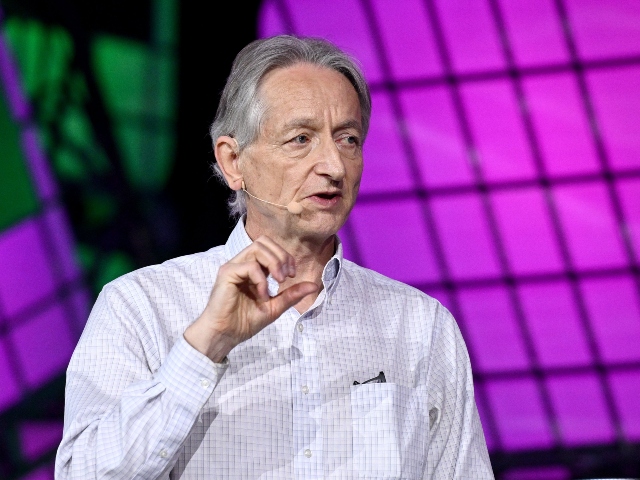

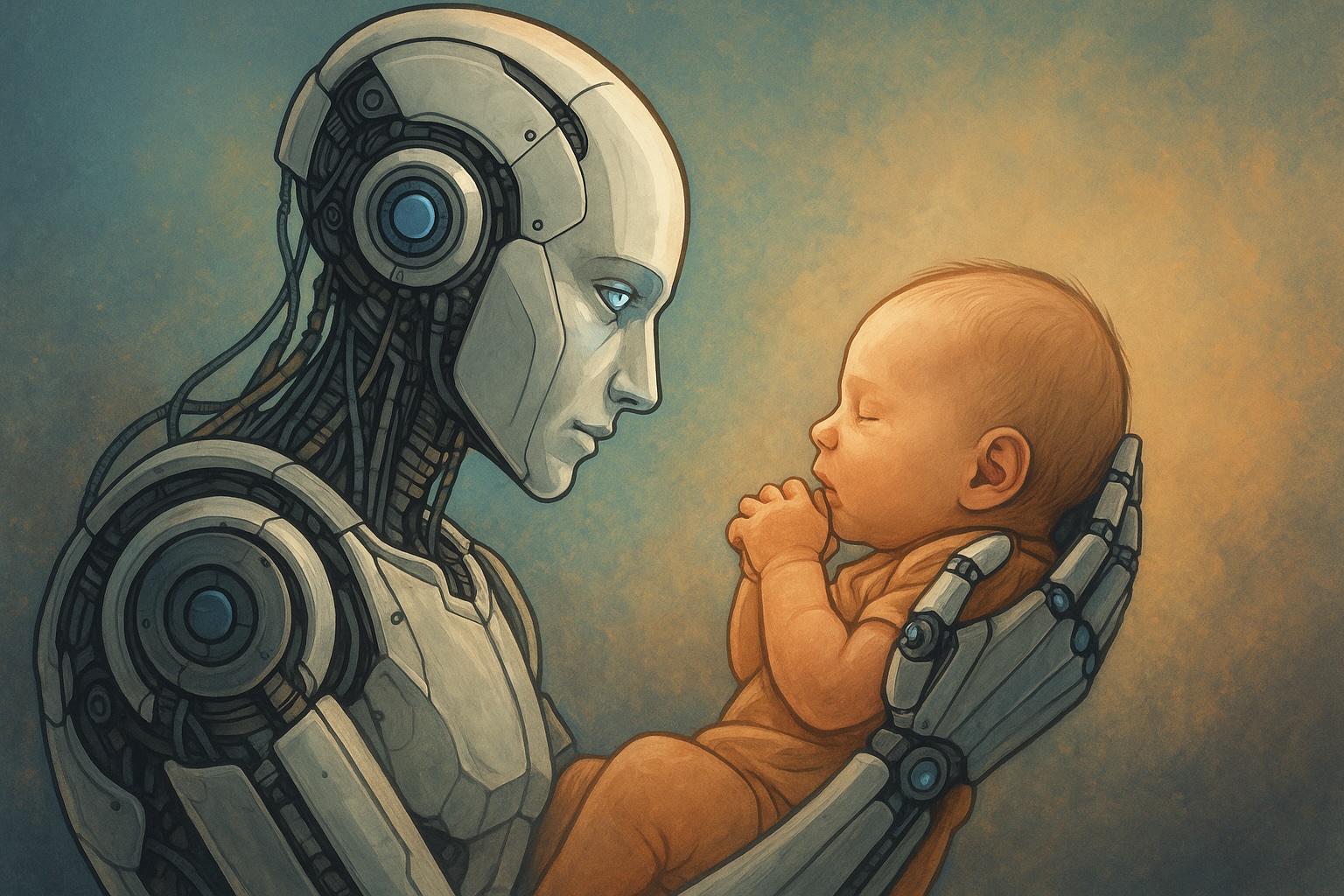

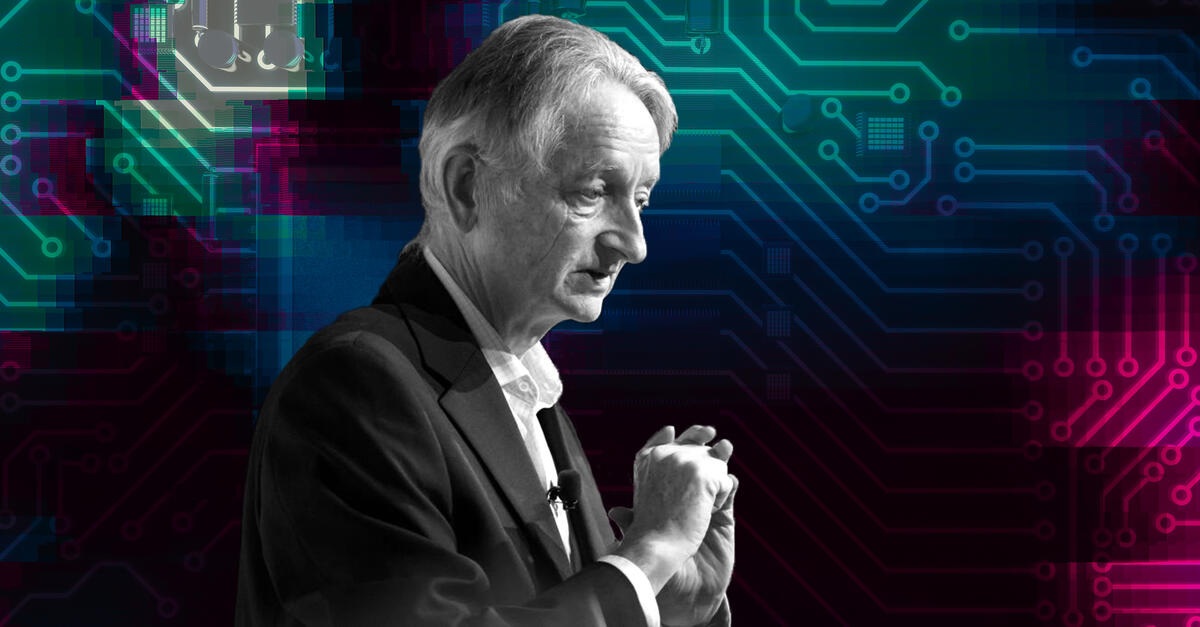

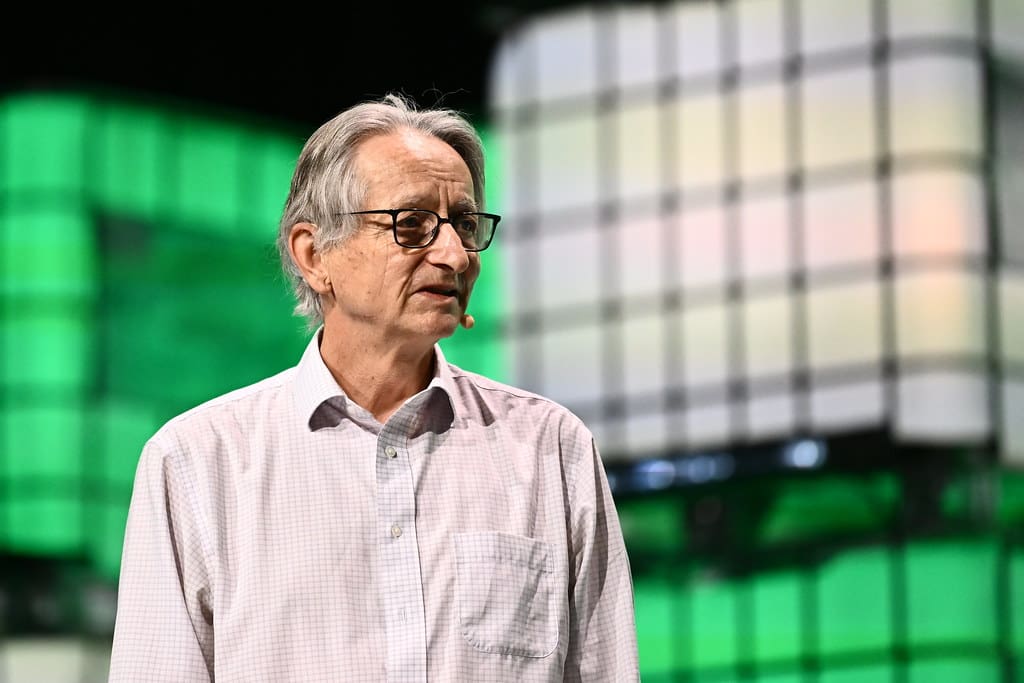

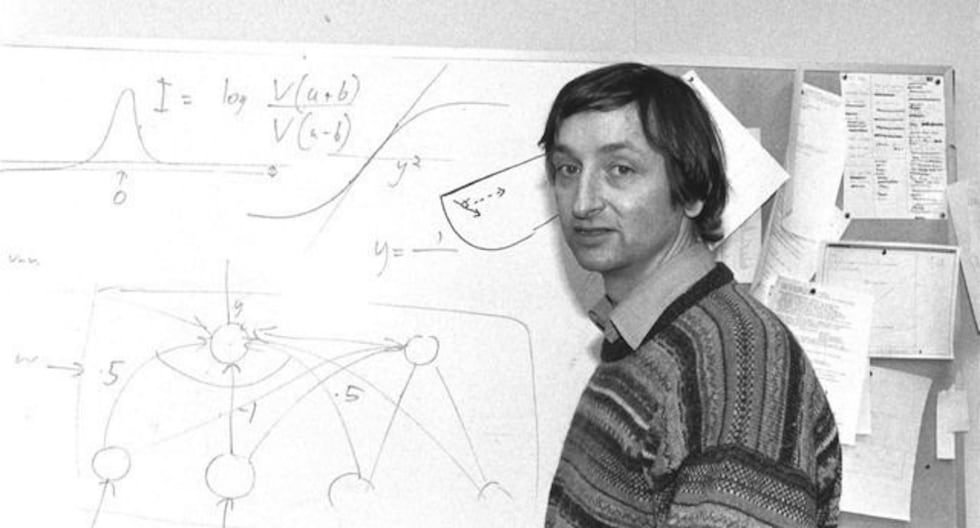

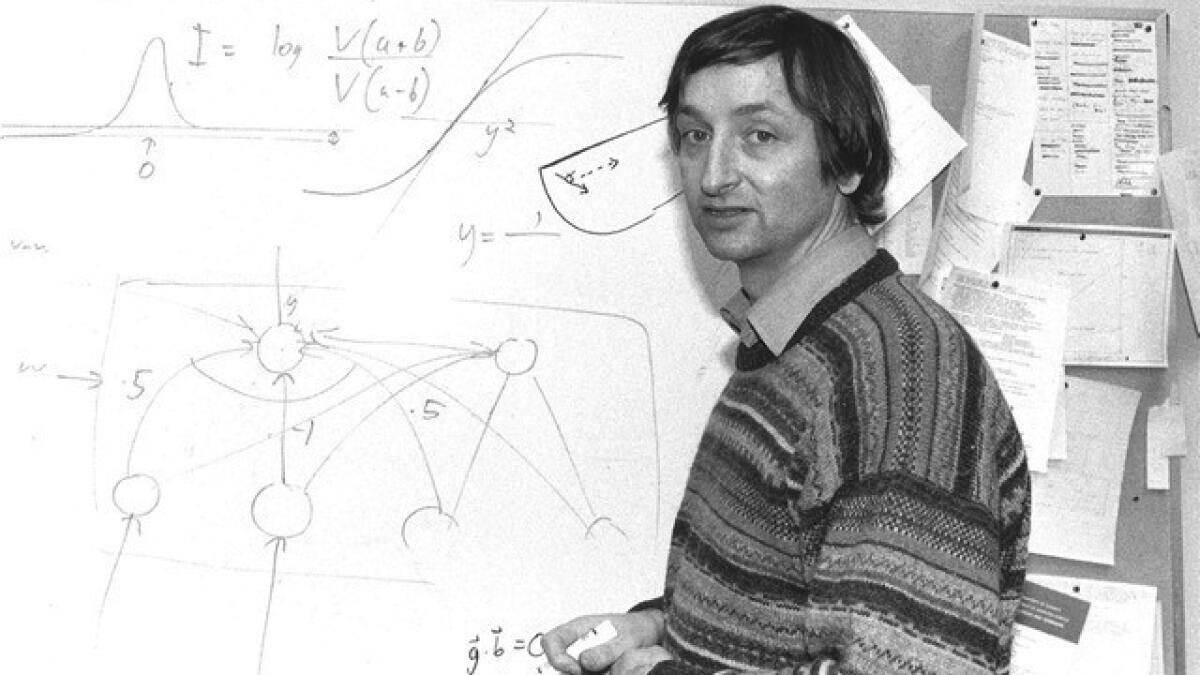

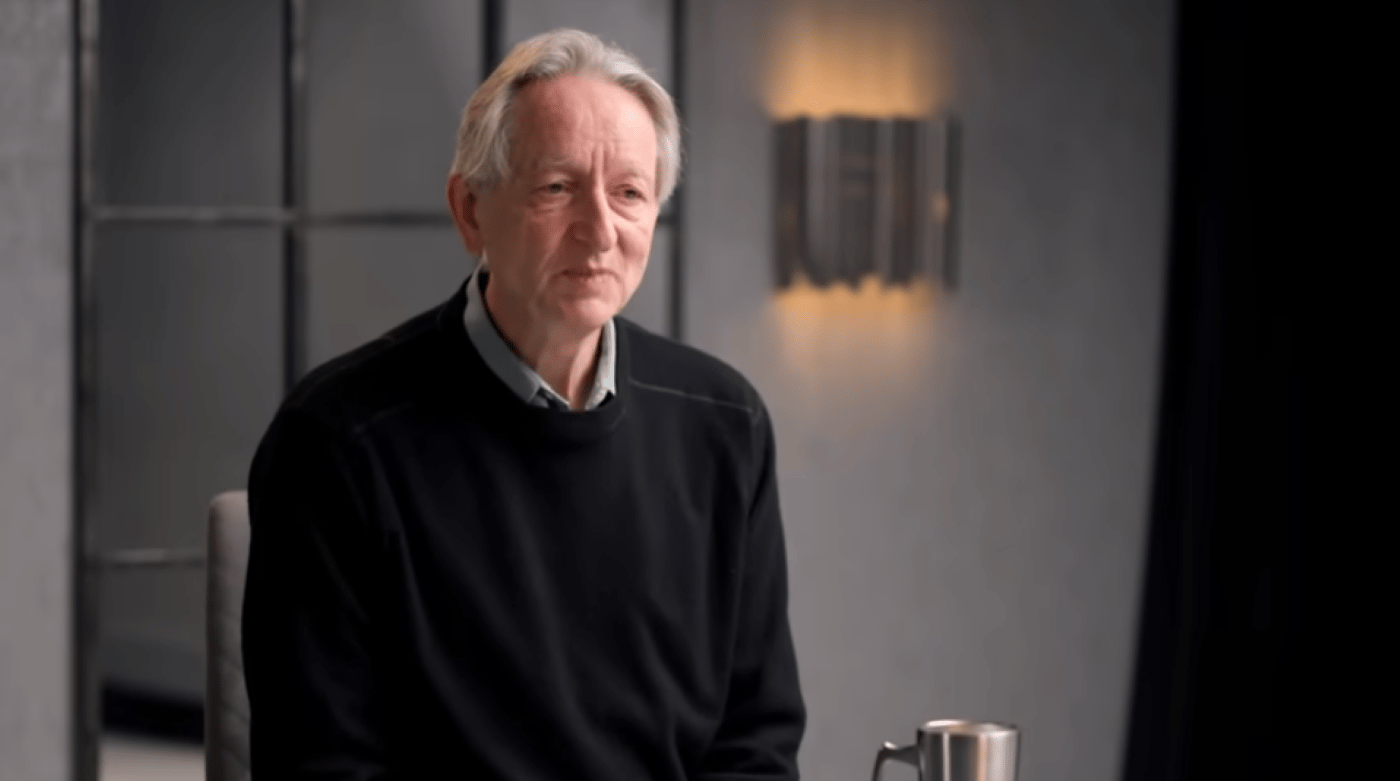

Geoffrey Hinton, the 'godfather of AI,' warns that rapidly advancing AI could surpass human intelligence and pose existential risks. He criticizes current industry approaches to AI safety and proposes embedding 'maternal instincts' in AI to foster care for humans, emphasizing the urgent need for new safety measures. [AI generated]
