The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

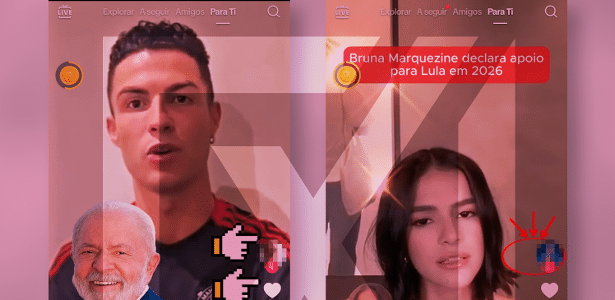

AI-generated deepfake videos on TikTok falsely depict celebrities like Cristiano Ronaldo, Lionel Messi, and Bruna Marquezine endorsing President Lula for Brazil's 2026 election. These manipulated videos, created with deepfake algorithms, spread misinformation and risk misleading the public and influencing political opinions. [AI generated]

Why's our monitor labelling this an incident or hazard?

The use of AI deepfake technology to create realistic but fake videos of celebrities endorsing a political candidate directly involves an AI system (deepfake algorithms). This manipulation spreads misinformation and can undermine trust in political processes, which meets the criteria for harm to communities. Because the harm is already occurring through the dissemination of these videos, the event qualifies as an AI Incident rather than a hazard or complementary information. [AI generated]