The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

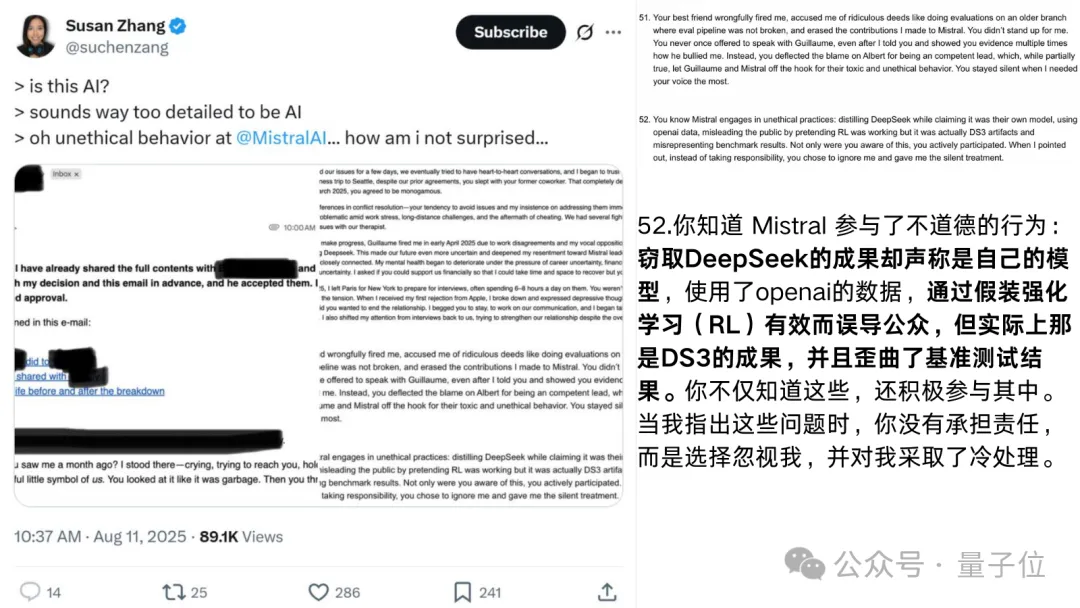

A former Mistral employee alleged that the company’s latest language model was secretly distilled from DeepSeek but falsely presented as an original reinforcement learning success, and that its benchmark results were misrepresented. The alleged lack of transparency and possible intellectual property violation have raised concerns about trust and ethics in AI development. [AI generated]