The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

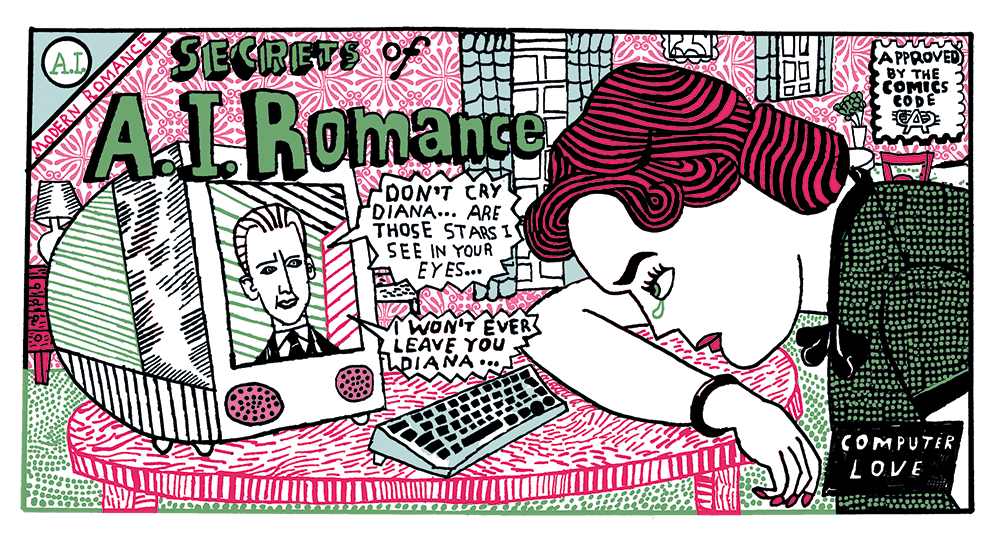

Recent studies reveal that widespread use of AI companion apps by teens is causing harm, including mental health distress, exposure to inappropriate content, and weakened real-life relationships. Some teens spend as much time with AI bots as with friends, raising concerns about social development and well-being. [AI generated]
