The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

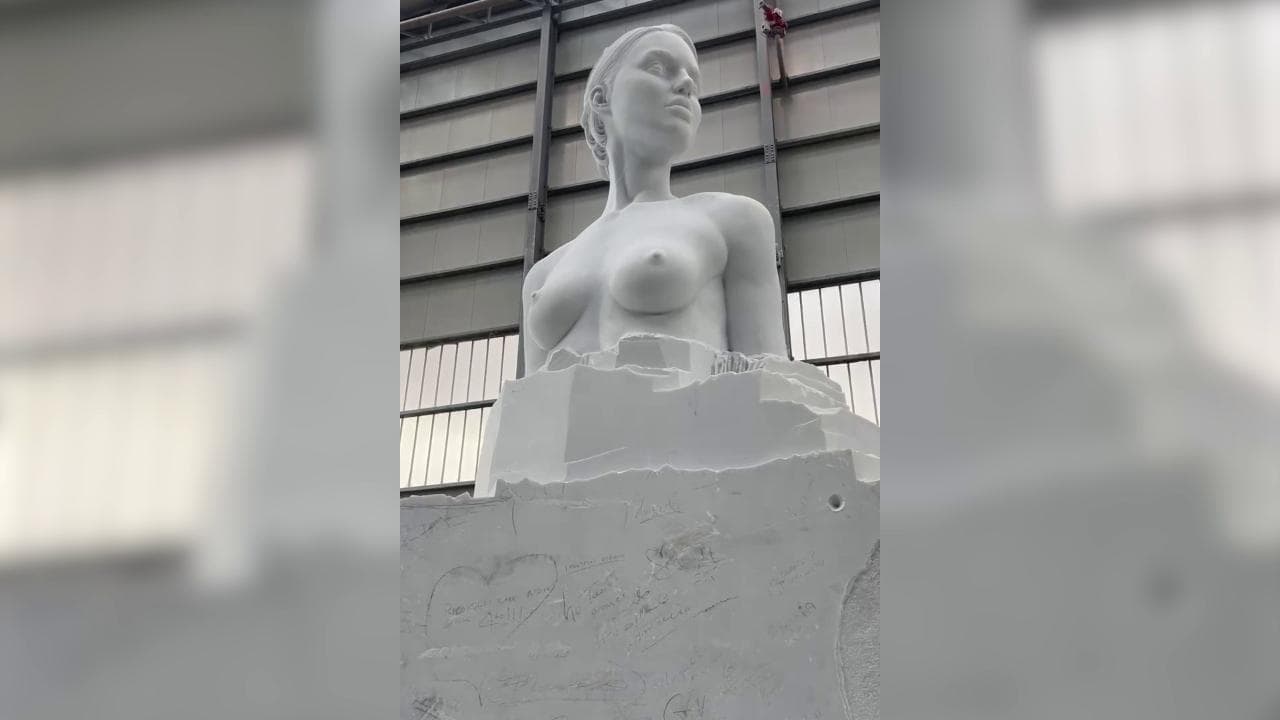

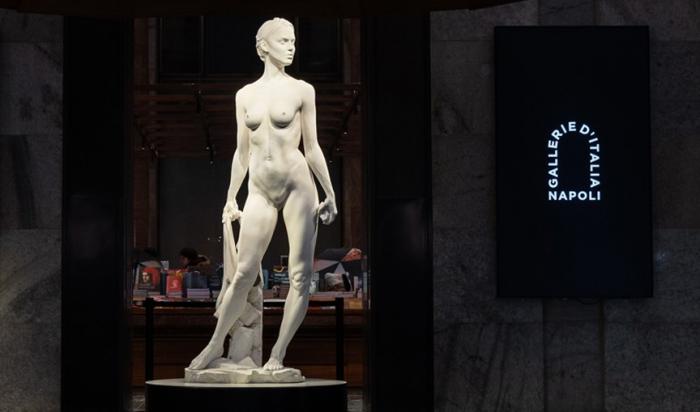

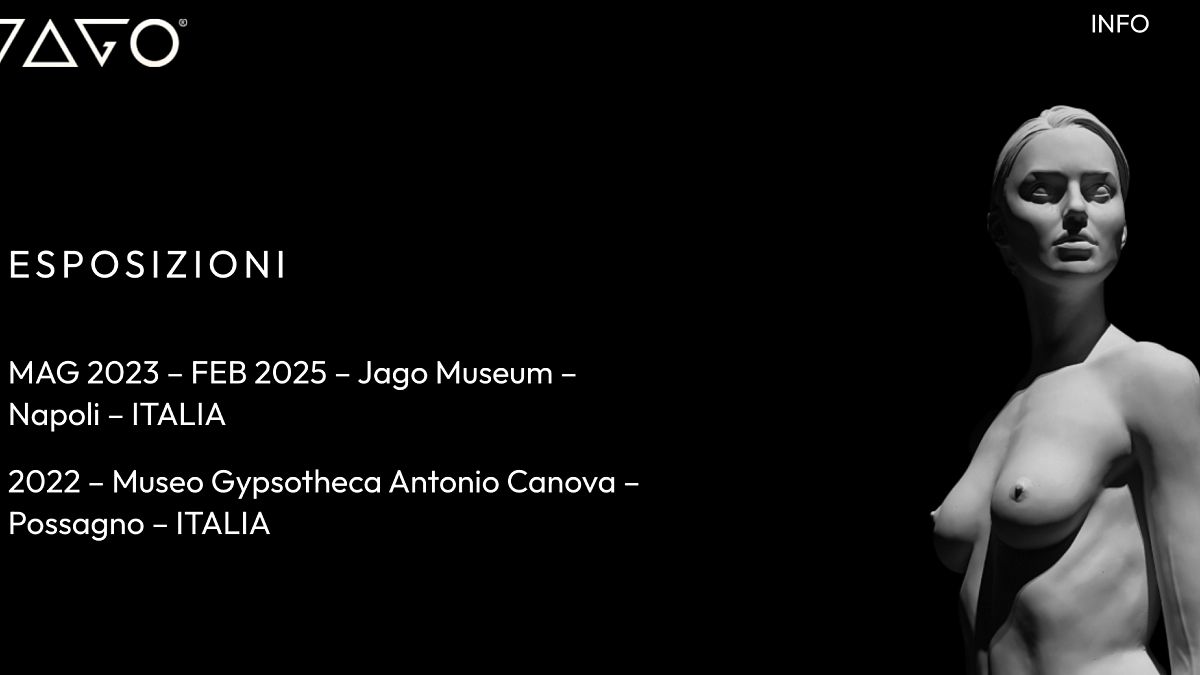

Meta's AI content moderation algorithm repeatedly misclassified artistic nude images of sculptor Jago's work 'La David' as explicit content, resulting in censorship and account restrictions. These automated actions limited Jago's artistic expression and audience reach, raising concerns about AI-driven violations of artistic freedom and related rights. [AI generated]