The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

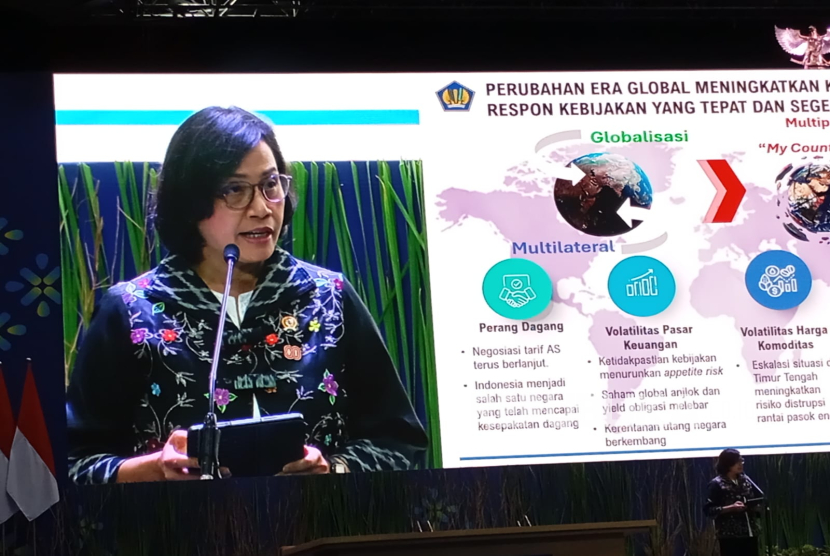

Deepfake technology was used to fabricate a video falsely showing Indonesia’s Finance Minister Sri Mulyani calling teachers a burden to the state. The video went viral, causing public outrage and reputational harm before officials debunked it, and it highlights the real-world dangers of AI-driven misinformation. [AI generated]

