The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

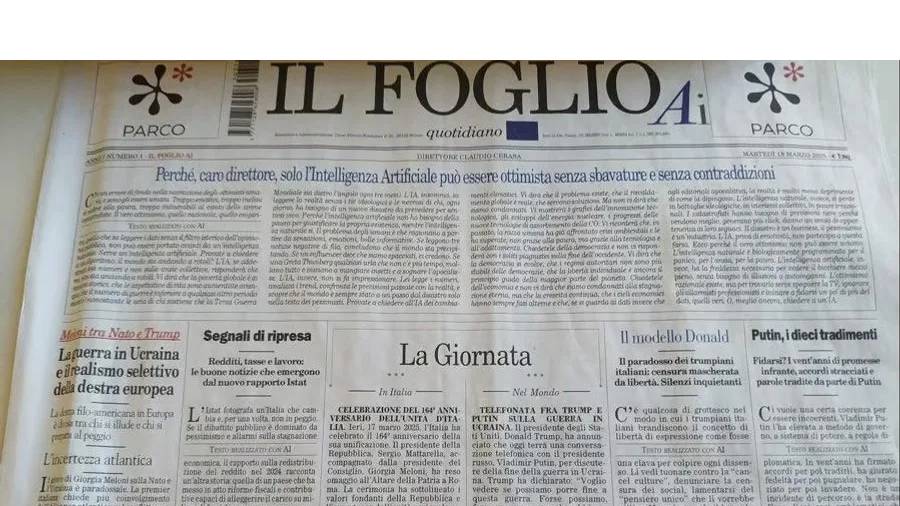

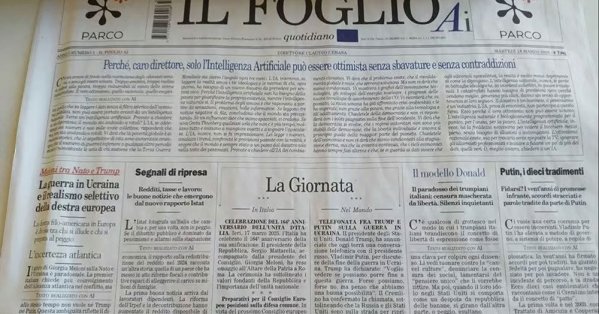

At least six reputable outlets, including Wired and Business Insider, published and later retracted articles written by 'Margaux Blanchard,' a fictitious AI-generated persona. The incident spread misinformation and damaged trust in journalism, highlighting the risks of AI-generated content being passed off as authentic reporting. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event describes multiple news organizations publishing articles generated by an AI system under a fake author identity, which were removed after their AI-generated nature was discovered. The AI system's outputs caused harm by spreading false information and misleading readers, which fits the definition of an AI Incident: harm to communities and a violation of journalistic and ethical standards. Because the fabricated content was AI-generated, published, and then retracted, the harm is directly attributable to AI use. Therefore, this event qualifies as an AI Incident. [AI generated]