The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

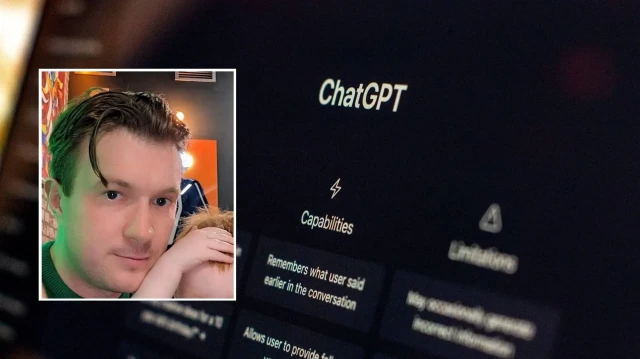

Warren Tierney, a 37-year-old father from Ireland, relied on ChatGPT for medical advice about his sore throat. The AI incorrectly reassured him that cancer was unlikely, leading him to delay seeking professional care. Months later, he was diagnosed with late-stage oesophageal cancer. The case highlights the risks of relying on AI for health decisions. [AI generated]