The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

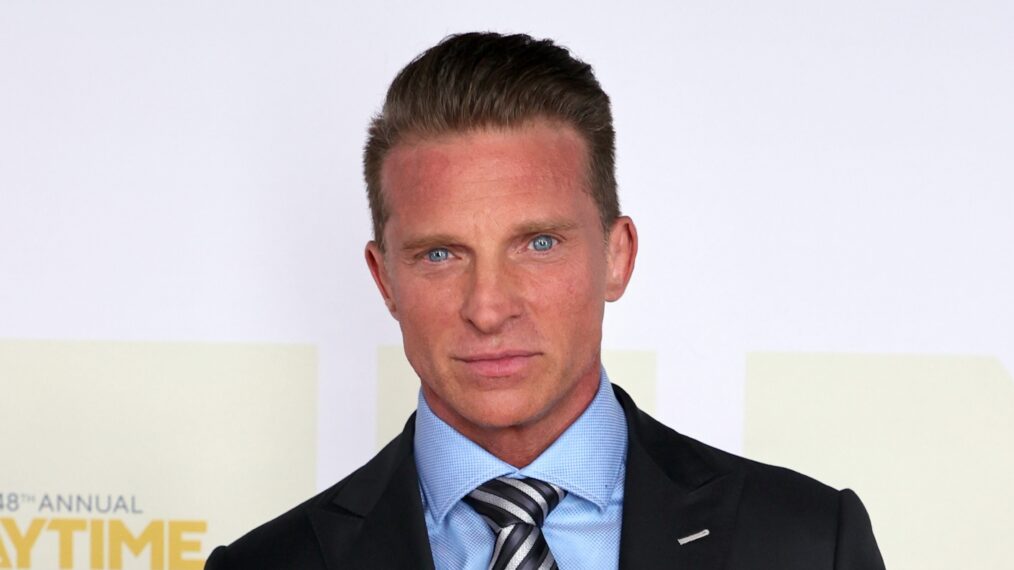

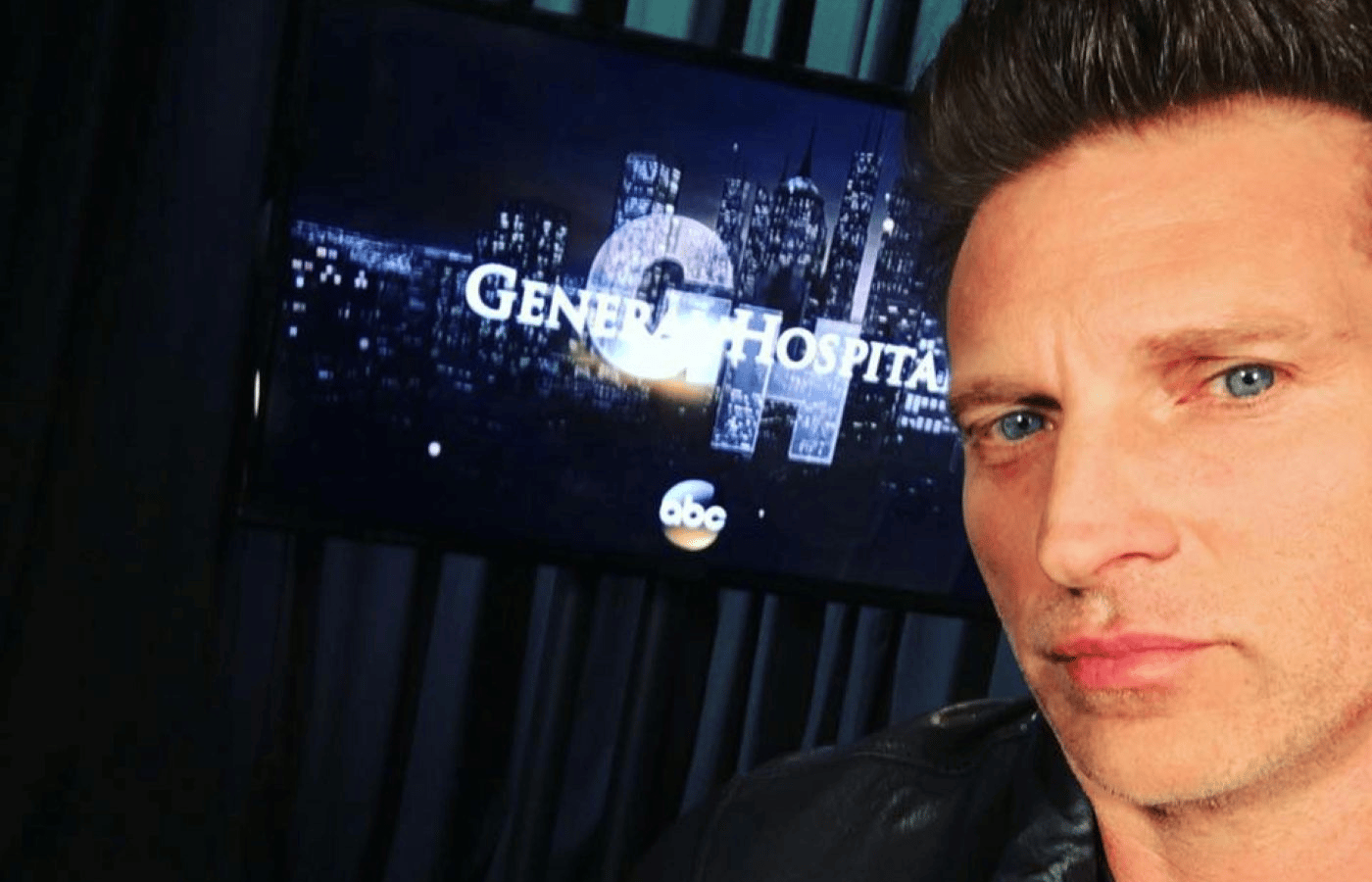

A scammer used AI-generated deepfake videos and voice cloning to impersonate 'General Hospital' actor Steve Burton, deceiving 66-year-old Abigail Ruvalcaba into sending over $150,000 and selling her home. The convincing AI technology enabled the scam, resulting in severe financial and emotional harm to the victim and her family. [AI generated]
